Abstract

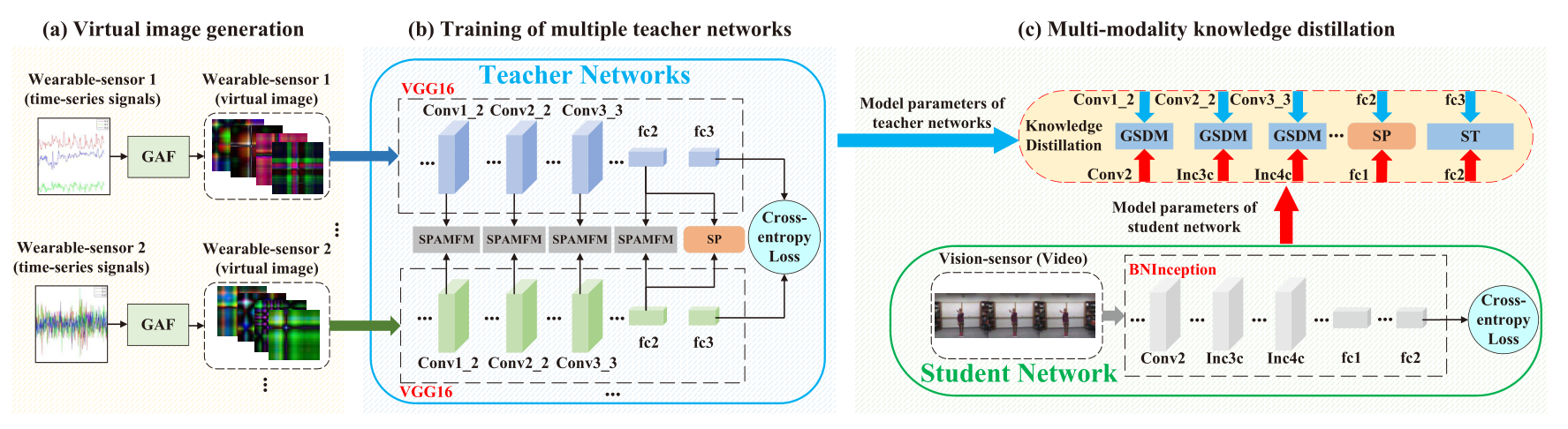

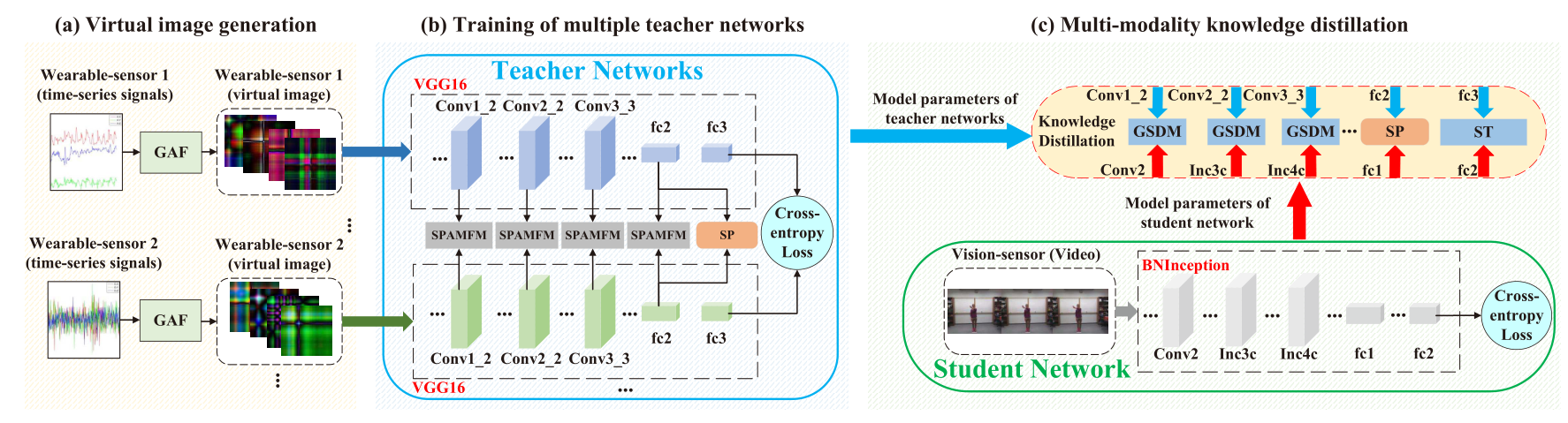

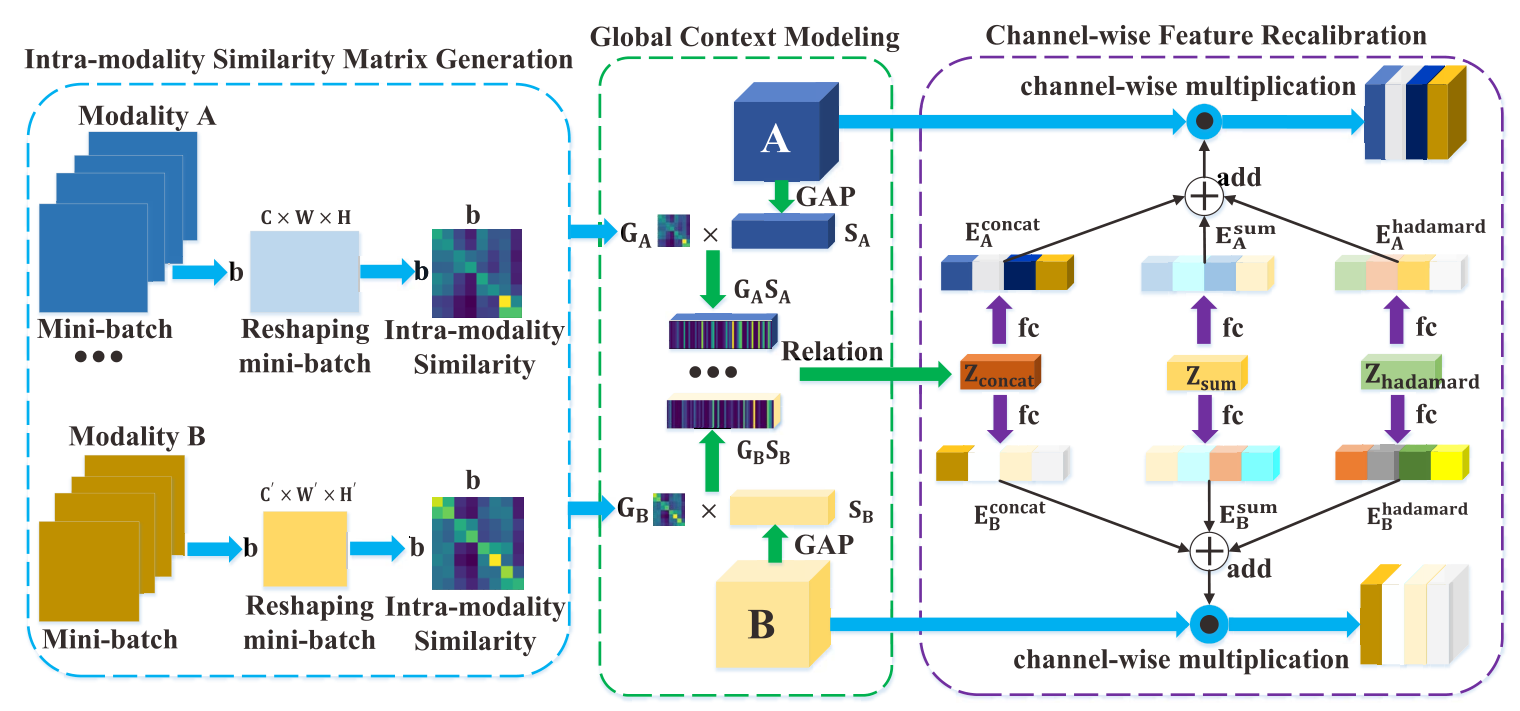

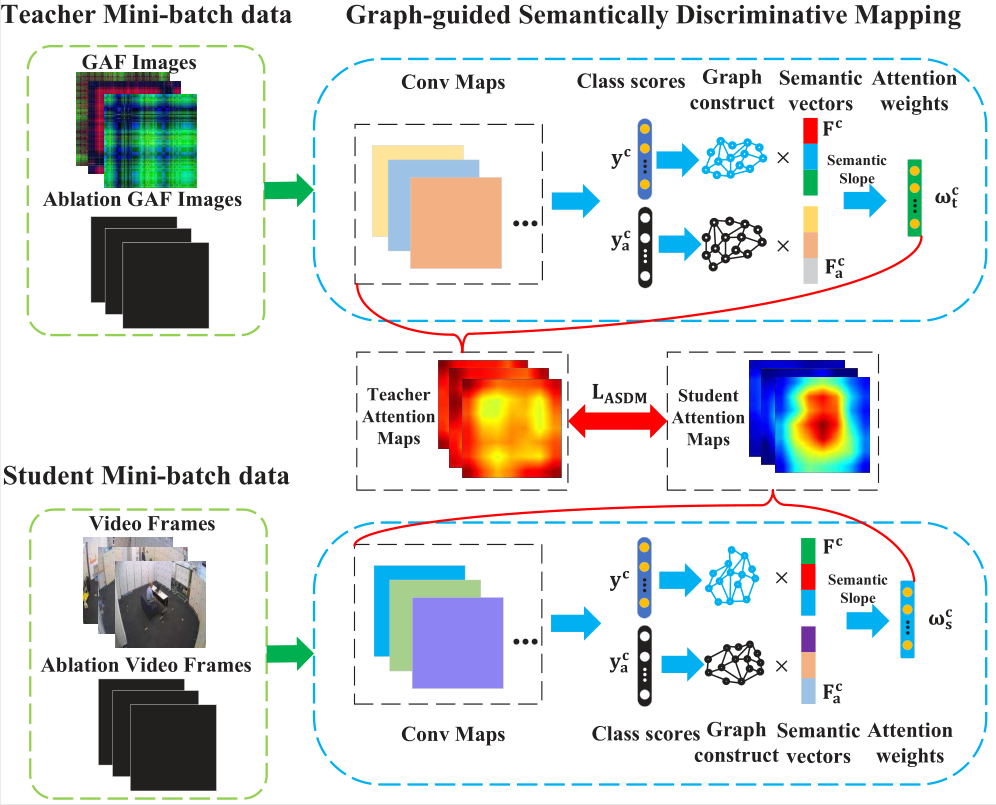

Existing vision-based action recognition is susceptible to occlusion and appearance variations, whereas wearable sensors can alleviate these challenges by capturing human motion as one-dimensional time-series signals (e.g., acceleration, gyroscope, and orientation). For the same action, the knowledge learned from vision sensors (videos or images) and from wearable sensors may be related and complementary. However, there is a large modality gap between action data captured by wearable sensors and by vision sensors in terms of data dimension, data distribution, and inherent information content. In this paper, we propose a novel framework, named Semantics-aware Adaptive Knowledge Distillation Networks (SAKDN), to enhance action recognition in the vision-sensor modality (videos) by adaptively transferring and distilling knowledge from multiple wearable sensors. SAKDN uses multiple wearable sensors as teacher modalities and RGB videos as the student modality. To preserve local temporal relationships and to facilitate the use of visual deep learning models, we transform the one-dimensional time-series signals of wearable sensors into two-dimensional images by designing a Gramian Angular Field (GAF) based virtual image generation model. Then, we introduce a novel Similarity-Preserving Adaptive Multi-modal Fusion Module (SPAMFM) to adaptively fuse intermediate representation knowledge from different teacher networks. Finally, to fully exploit and transfer the knowledge of multiple well-trained teacher networks to the student network, we propose a novel Graph-guided Semantically Discriminative Mapping (GSDM) module, which utilizes graph-guided ablation analysis to produce a visual explanation that highlights the important regions across modalities while concurrently preserving the interrelations of the original data. 
Experimental results on the Berkeley-MHAD, UTD-MHAD, and MMAct datasets demonstrate the effectiveness of SAKDN for adaptive knowledge transfer from wearable-sensor modalities to vision-sensor modalities. The code is publicly available at https://github.com/YangLiu9208/SAKDN.
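As a rough illustration of the virtual image generation step described in the abstract, the NumPy sketch below maps each axis of a wearable-sensor recording to a Gramian Angular Field image. It is a minimal sketch under our own assumptions, not the SAKDN implementation: the function names `gramian_angular_field` and `signals_to_virtual_image` are hypothetical, and this summary does not say whether SAKDN uses the summation (GASF) or difference (GADF) variant, or how it resizes and stacks channels.

```python
import numpy as np


def rescale_to_unit_interval(x: np.ndarray) -> np.ndarray:
    """Min-max rescale a 1-D signal to [-1, 1] so that arccos is defined."""
    x = np.asarray(x, dtype=np.float64)
    x_min, x_max = x.min(), x.max()
    if x_max == x_min:
        # A constant signal carries no angular information; map it to 0.
        return np.zeros_like(x)
    scaled = ((x - x_max) + (x - x_min)) / (x_max - x_min)
    # Guard against floating-point drift just outside [-1, 1].
    return np.clip(scaled, -1.0, 1.0)


def gramian_angular_field(x: np.ndarray, method: str = "summation") -> np.ndarray:
    """Encode a 1-D time series of length T as a (T, T) GAF image.

    summation:  G[i, j] = cos(phi_i + phi_j)   (GASF)
    difference: G[i, j] = sin(phi_i - phi_j)   (GADF)
    """
    phi = np.arccos(rescale_to_unit_interval(x))  # polar angle per time step
    if method == "summation":
        return np.cos(phi[:, None] + phi[None, :])
    if method == "difference":
        return np.sin(phi[:, None] - phi[None, :])
    raise ValueError("method must be 'summation' or 'difference'")


def signals_to_virtual_image(signal: np.ndarray, method: str = "summation") -> np.ndarray:
    """Turn a (T, C) multi-axis recording into a (C, T, T) image.

    Hypothetical layout: each channel (e.g. the x/y/z axes of an
    accelerometer) becomes one GAF image, stacked channel-first.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if signal.ndim != 2:
        raise ValueError("expected an array of shape (T, C)")
    return np.stack(
        [gramian_angular_field(signal[:, c], method) for c in range(signal.shape[1])],
        axis=0,
    )


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    accel = rng.normal(size=(64, 3))  # hypothetical 64-step, 3-axis accelerometer window
    image = signals_to_virtual_image(accel)
    print(image.shape)  # (3, 64, 64)
```

Because entry (i, j) depends only on the angles at time steps i and j, the image keeps temporal correlations in a 2-D grid that a standard image CNN backbone can consume, which is the motivation the abstract gives for the transformation.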

Framework

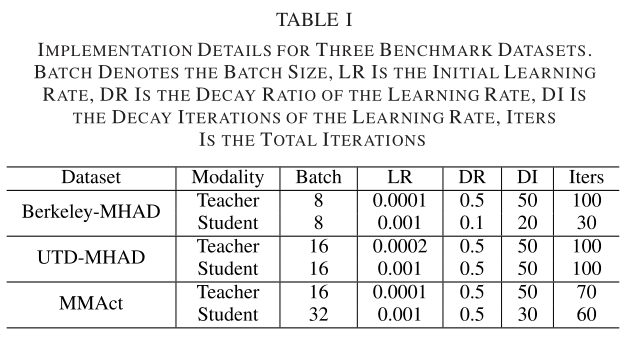

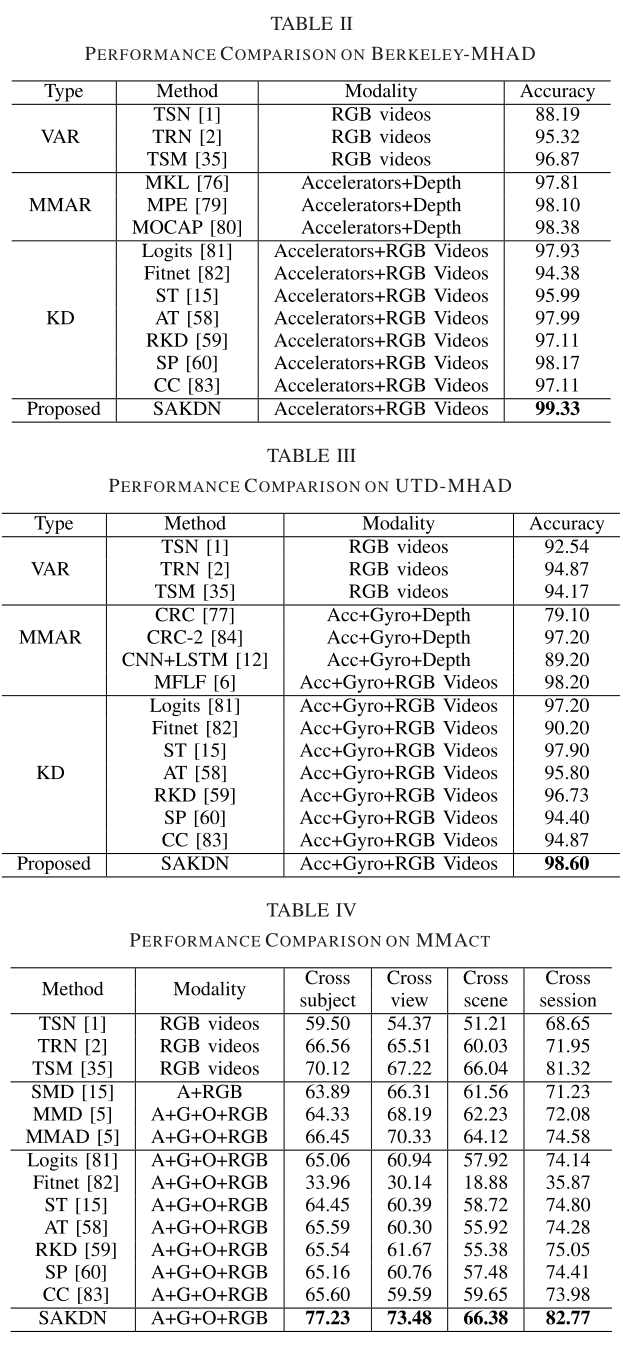

Experiment

Conclusion

In this paper, we propose an end-to-end knowledge distillation framework, named Semantics-aware Adaptive Knowledge Distillation Networks (SAKDN), to adaptively distill complementary knowledge from multiple wearable sensors (teachers) to the vision sensor (student) and thereby improve action recognition performance in the vision-sensor modality (videos). To fully utilize the complementary knowledge from multiple teachers, we propose a novel plug-and-play module, named Similarity-Preserving Adaptive Multi-modal Fusion Module (SPAMFM), which integrates intra-modality similarity, semantic embeddings, and multiple relational knowledge to learn a global context representation and adaptively recalibrate the channel-wise features in each teacher network. To effectively exploit and transfer the knowledge of multiple well-trained teachers to the student, we propose a novel knowledge distillation module, named Graph-guided Semantically Discriminative Mapping (GSDM), which utilizes graph-guided ablation analysis to produce a visual explanation that highlights the regions important for predicting the semantic concept while preserving the interrelations of the data. Extensive experiments on three benchmarks demonstrate the effectiveness of SAKDN for adaptive knowledge transfer from wearable sensors to vision sensors.
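The conclusion states that SPAMFM integrates intra-modality similarity and that GSDM preserves the interrelations of the data. One well-known way to express "preserve pairwise interrelations" as a training loss is similarity-preserving knowledge distillation (Tung and Mori, ICCV 2019), which matches the batch-wise similarity matrices of teacher and student activations. The NumPy sketch below shows that loss under our own assumptions. It is not the SAKDN code: it omits the graph-guided ablation analysis and the channel recalibration entirely, and the weighted multi-teacher combination in the hypothetical `multi_teacher_sp_loss` is only an illustration.

```python
import numpy as np


def normalized_similarity(features) -> np.ndarray:
    """Row-L2-normalized (batch x batch) similarity matrix of one network's activations."""
    q = np.asarray(features, dtype=np.float64)
    q = q.reshape(q.shape[0], -1)  # flatten everything except the batch axis
    g = q @ q.T  # pairwise inner products between samples in the batch
    norms = np.linalg.norm(g, axis=1, keepdims=True)
    return g / np.maximum(norms, 1e-12)


def similarity_preserving_loss(teacher_feats, student_feats) -> float:
    """Squared Frobenius distance between normalized similarity matrices, divided by b^2.

    Teacher and student may have different feature shapes; only the batch size must match.
    """
    b = np.shape(teacher_feats)[0]
    if np.shape(student_feats)[0] != b:
        raise ValueError("teacher and student batches must have the same size")
    diff = normalized_similarity(teacher_feats) - normalized_similarity(student_feats)
    return float(np.sum(diff ** 2) / b ** 2)


def multi_teacher_sp_loss(teacher_feats_list, student_feats, weights=None) -> float:
    """Hypothetical weighted sum over several teachers (e.g. one per wearable sensor)."""
    if weights is None:
        weights = [1.0 / len(teacher_feats_list)] * len(teacher_feats_list)
    return float(
        sum(w * similarity_preserving_loss(t, student_feats)
            for w, t in zip(weights, teacher_feats_list))
    )


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    acc_teacher = rng.normal(size=(8, 64, 7, 7))    # hypothetical accelerometer-teacher activations
    gyro_teacher = rng.normal(size=(8, 64, 7, 7))   # hypothetical gyroscope-teacher activations
    student = rng.normal(size=(8, 128, 4, 7, 7))    # hypothetical video-student activations
    print(multi_teacher_sp_loss([acc_teacher, gyro_teacher], student))
```

Because each row of the similarity matrix is normalized, the loss is invariant to a global rescaling of either network's activations, and teacher and student features of different widths can be compared directly. That property is convenient when the teachers are image CNNs over GAF images and the student is a video network.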