Abstract

Facial expression recognition (FER) has received significant attention and made notable progress over the past decade, but data inconsistencies among different FER datasets greatly hinder the ability of models learned on one dataset to generalize to another. Recently, a series of cross-domain FER algorithms (CD-FERs) have been developed to address this issue.

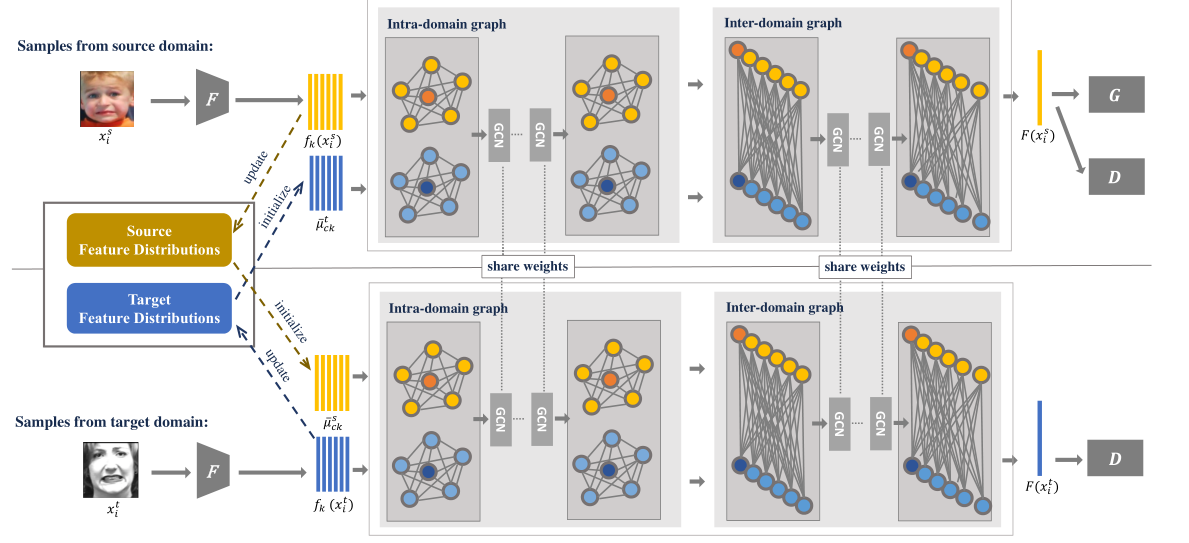

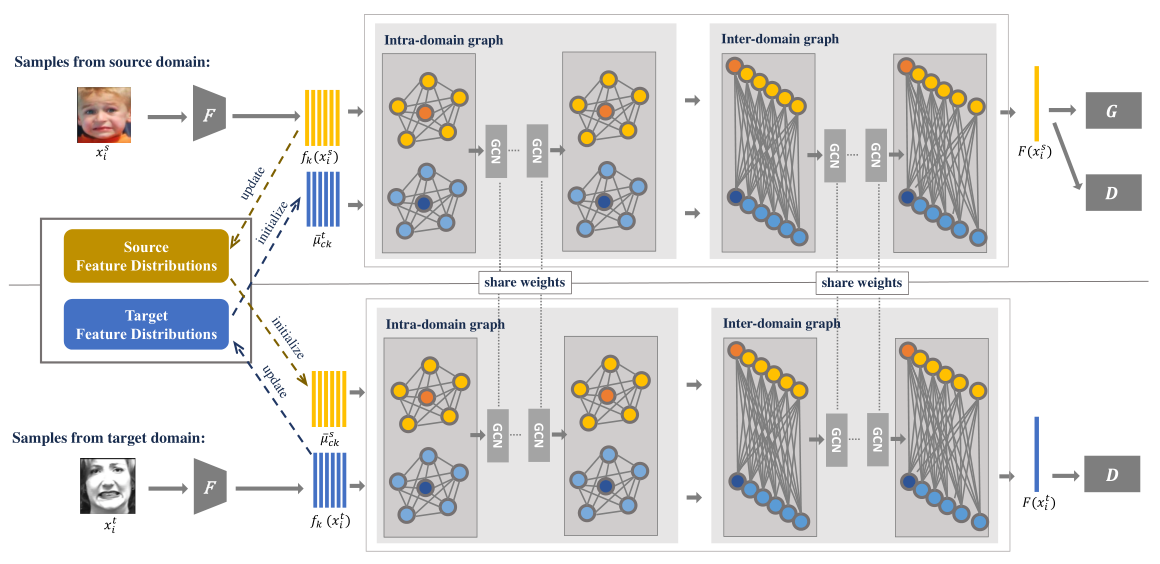

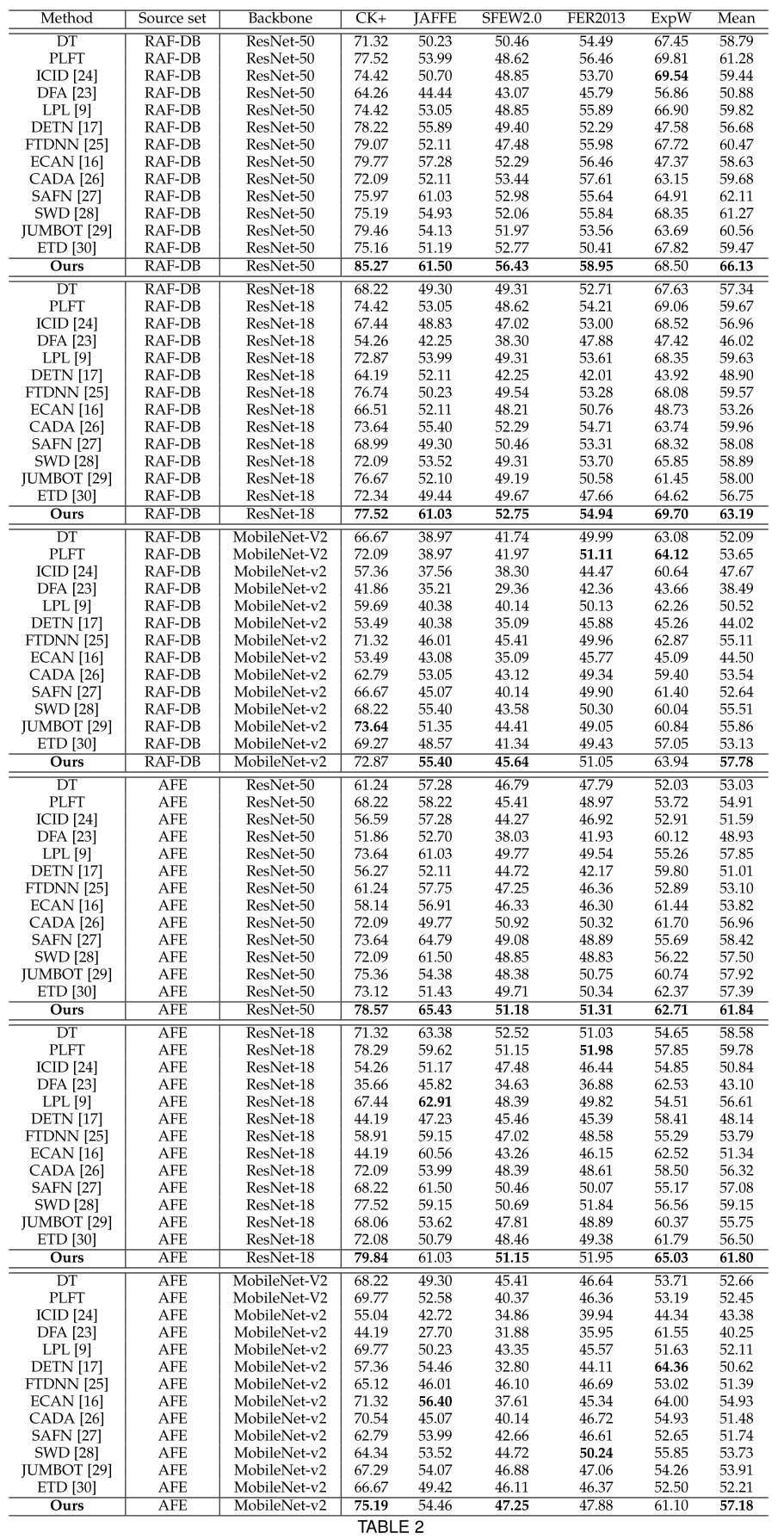

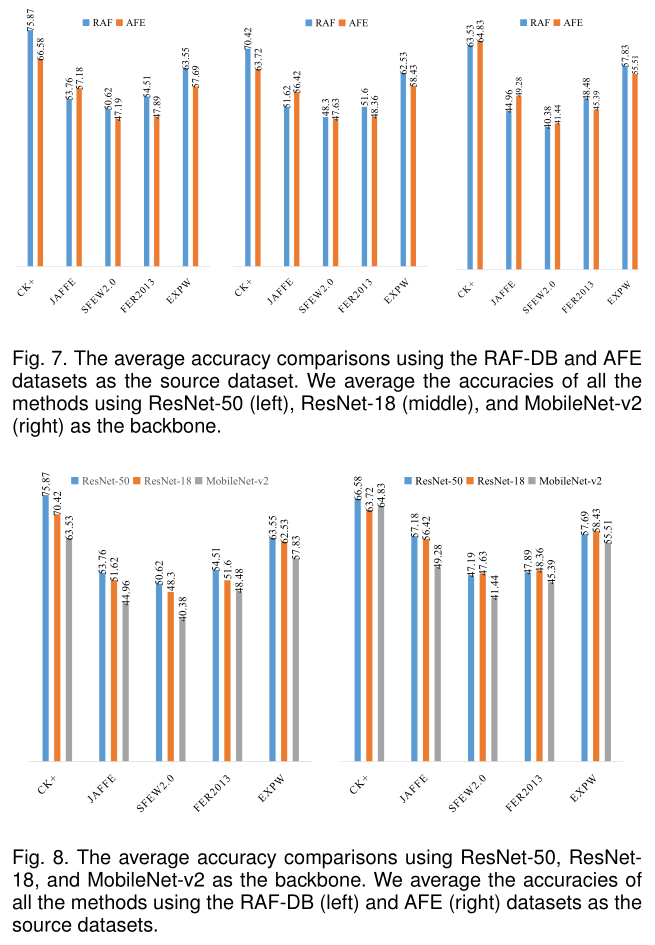

Although each of these algorithms claims superior performance, comprehensive and fair comparisons are lacking due to inconsistent choices of the source/target datasets and feature extractors. In this work, we first construct a unified CD-FER evaluation benchmark, in which we re-implement the well-performing CD-FER algorithms and recently published general domain adaptation algorithms and ensure that all of them adopt the same source/target datasets and feature extractors for fair CD-FER evaluations. Based on this analysis, we find that most of the current state-of-the-art algorithms use adversarial learning mechanisms that aim to learn holistic domain-invariant features to mitigate domain shifts. However, these algorithms ignore local features, which are more transferable across different datasets and carry more detailed content for fine-grained adaptation. Therefore, we develop a novel adversarial graph representation adaptation (AGRA) framework that integrates graph representation propagation with adversarial learning to realize effective cross-domain holistic-local feature co-adaptation. Specifically, our framework first builds two graphs to correlate holistic and local regions within each domain and across different domains, respectively. Then, it extracts holistic-local features from the input image and uses learnable per-class statistical distributions to initialize the corresponding graph nodes. Finally, two stacked graph convolution networks (GCNs) are adopted to propagate holistic-local features within each domain to explore their interaction and across different domains for holistic-local feature co-adaptation. In this way, the AGRA framework can adaptively learn fine-grained domain-invariant features and thus facilitate cross-domain expression recognition. We conduct extensive and fair comparisons on the unified evaluation benchmark and show that the proposed AGRA framework outperforms previous state-of-the-art methods.
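The two-graph propagation described above can be illustrated with a minimal sketch. This is not the AGRA implementation: it assumes a hypothetical node layout (one holistic node followed by local-region nodes per domain), fully connected intra- and inter-domain graphs, and the standard symmetric GCN normalization. The function names (`build_graphs`, `normalize_adjacency`, `gcn_layer`, `co_adapt`) and the weight matrices are illustrative, and the adversarial loss and the per-class statistical initialization of nodes are omitted.

```python
import math


def build_graphs(num_regions):
    """Build intra- and inter-domain adjacency matrices (assumed layout).

    Nodes 0..R-1 belong to the source domain and R..2R-1 to the target
    domain; in each domain the first node stands for the holistic region
    and the remaining ones for local regions.
    """
    n = 2 * num_regions
    intra = [[0.0] * n for _ in range(n)]
    inter = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            same_domain = (i < num_regions) == (j < num_regions)
            if same_domain:
                intra[i][j] = 1.0  # edge between regions of one domain
            else:
                inter[i][j] = 1.0  # edge between source and target regions
    return intra, inter


def normalize_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2}, the usual GCN normalization."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    deg = [sum(row) for row in a_hat]  # >= 1 because of the self-loops
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols]
            for row in a]


def gcn_layer(adj_norm, feats, weight):
    """One propagation step: ReLU(A_norm @ H @ W)."""
    out = matmul(matmul(adj_norm, feats), weight)
    return [[max(0.0, v) for v in row] for row in out]


def co_adapt(feats, num_regions, w_intra, w_inter):
    """Stack two GCN layers: intra-domain first, then inter-domain."""
    intra, inter = build_graphs(num_regions)
    hidden = gcn_layer(normalize_adjacency(intra), feats, w_intra)
    return gcn_layer(normalize_adjacency(inter), hidden, w_inter)


if __name__ == "__main__":
    # Toy input: 3 regions per domain, 2-dimensional node features.
    identity = [[1.0, 0.0], [0.0, 1.0]]
    feats = [[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 3
    for row in co_adapt(feats, 3, identity, identity):
        print(row)
```

In this toy setup the first layer mixes features only among regions of the same domain, and the second mixes them only across domains, which mirrors the intra-domain interaction and cross-domain co-adaptation steps. In a trained model the weight matrices would be learned, and the node features would come from the feature extractor rather than being fixed by hand.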

Framework

Experiment

Conclusion

In this work, we first analyze the inconsistent choices of the source/target datasets and feature extractors and their effect on performance in the CD-FER task. Then, we construct a unified CD-FER evaluation benchmark, in which all the competing methods are compared fairly with unified source/target datasets and feature extractors. A new AFE dataset is also built and added to the benchmark. In addition, based on the observation that current leading CD-FER methods mainly focus on learning holistic domain-invariant features but ignore local features that are more transferable and carry more detailed content, we develop a novel AGRA framework that integrates the graph propagation mechanism with adversarial learning for effective holistic-local representation co-adaptation across different domains. In the experiments, we use the unified evaluation benchmark to compare the proposed AGRA framework with current state-of-the-art methods, and the results demonstrate the effectiveness of the proposed framework.