Abstract

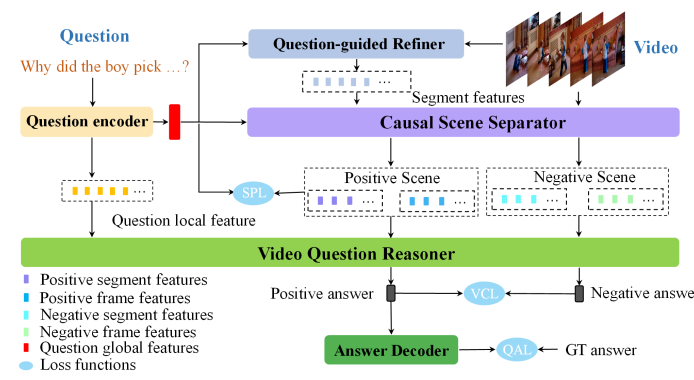

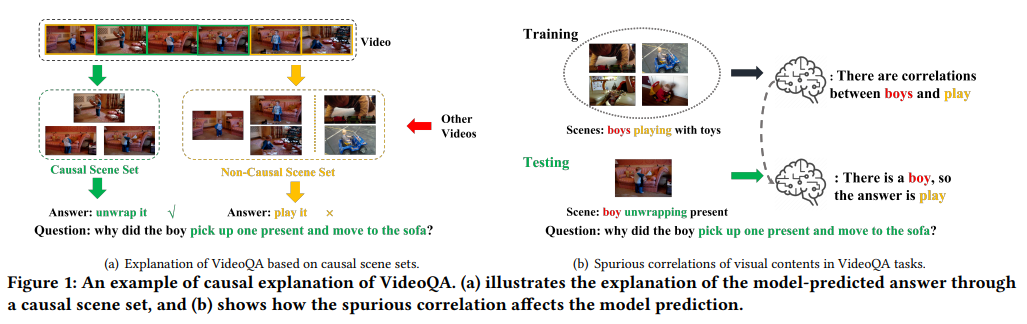

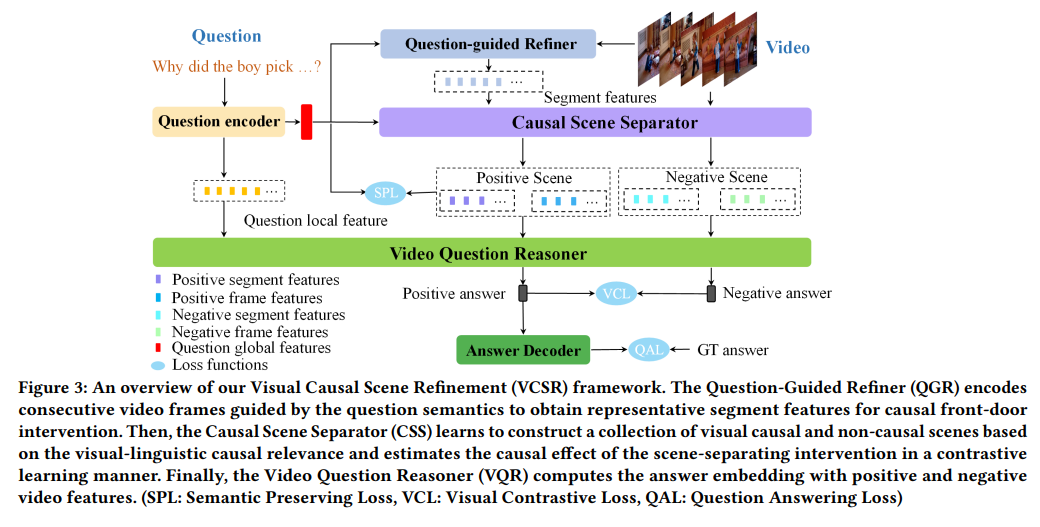

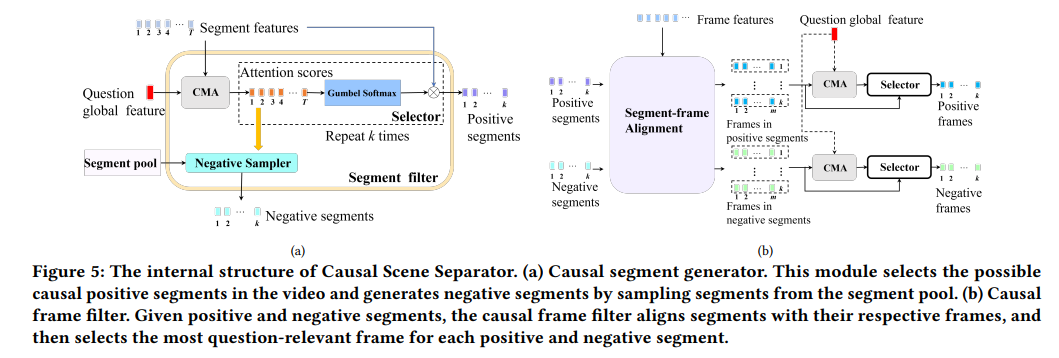

Existing methods for video question answering (VideoQA) often suffer from spurious correlations between different modalities, leading to a failure in identifying the dominant visual evidence and the intended question. Moreover, these methods function as black boxes, making it difficult to interpret the visual scene during the QA process. In this paper, to discover critical video segments and frames that serve as the visual causal scene for generating reliable answers, we present a causal analysis of VideoQA and propose a framework for cross-modal causal relational reasoning, named Visual Causal Scene Refinement (VCSR). Particularly, a set of causal front door intervention operations is introduced to explicitly find the visual causal scenes at both segment and frame levels. Our VCSR in volves two essential modules: i) the Question-Guided Refiner (QGR) module, which refines consecutive video frames guided by the question semantics to obtain more representative segment features for causal front-door intervention; ii) the Causal Scene Separator (CSS) module, which discovers a collection of visual causal and non-causal scenes based on the visual-linguistic causal relevance and estimates the causal effect of the scene-separating intervention in a contrastive learning manner. Extensive experiments on the NExT-QA, Causal-VidQA, and MSRVTT-QA datasets demonstrate the superiority of our VCSR in discovering visual causal scene and achieving robust video question answering. The code is available at https://github.com/YangLiu9208/VCSR.

Framework

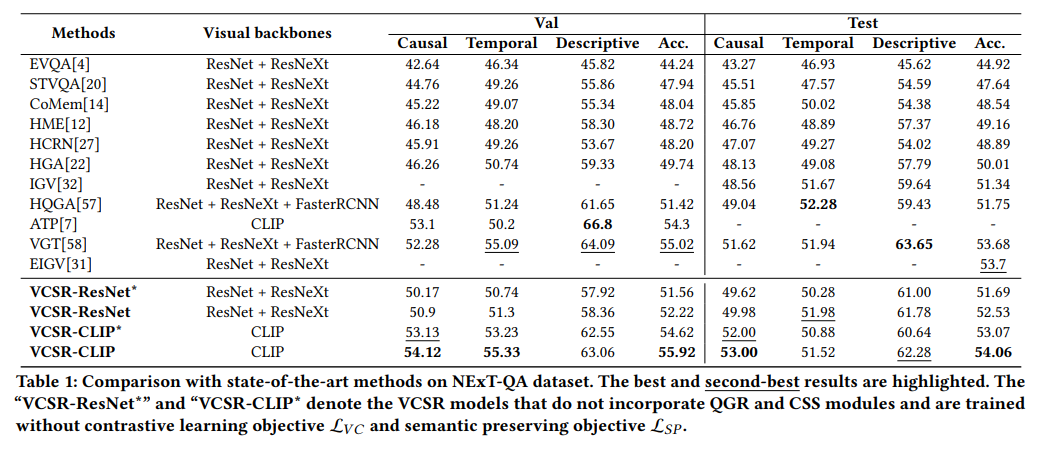

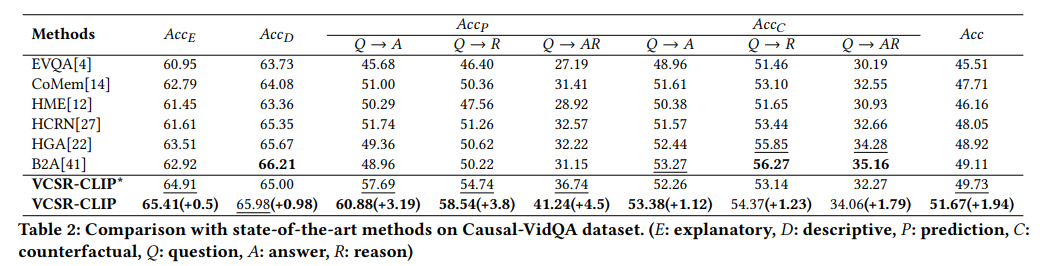

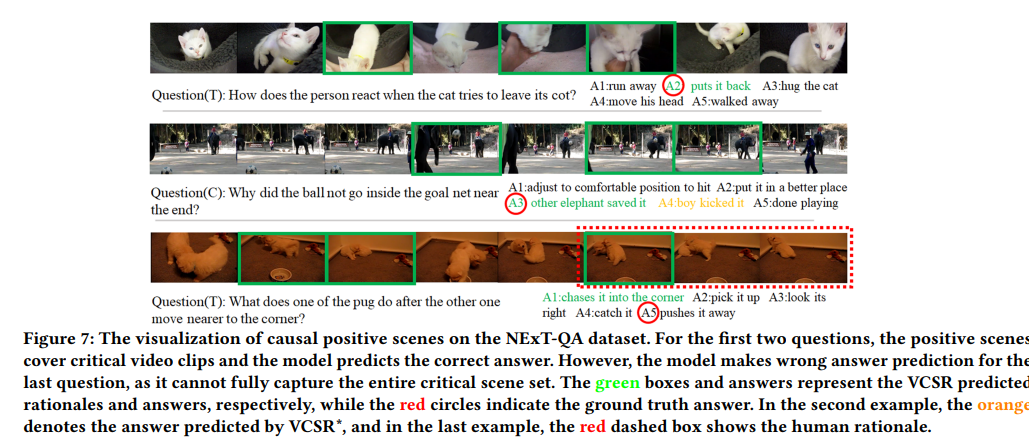

Experiment

Conclusion

In this paper, we propose a cross-modal causal relational reasoning framework named VCSR for VideoQA, to explicitly discover the visual causal scenes through causal front-door interventions. From the perspective of causality, we model the causal effect between video-question pairs and the answer based on the structural causal model (SCM). To obtain representative segment features for front-door intervention, we introduce the Question-Guided Refiner (QGR) module. To identify visual causal and non-causal scenes, we propose the Causal Scene Separator (CSS) module. Extensive experiments on three benchmarks demonstrate the superiority of VCSR over the state-of-the-art methods. We believe our work could inspire more causal analysis research in vision-language tasks.

Acknowledgement

This work is supported by the National Key R&D Program of China under Grant 2021ZD0111601, in part by the National Natural Science Foundation of China under Grants 62002395 and 61976250, in part by the Guangdong Basic and Applied Basic Research Foundation under Grants 2023A1515011530, 2021A1515012311, and 2020B15 15020048, and in part by the Guangzhou Science and Technology Planning Project under Grant 2023A04J2030.