Abstract

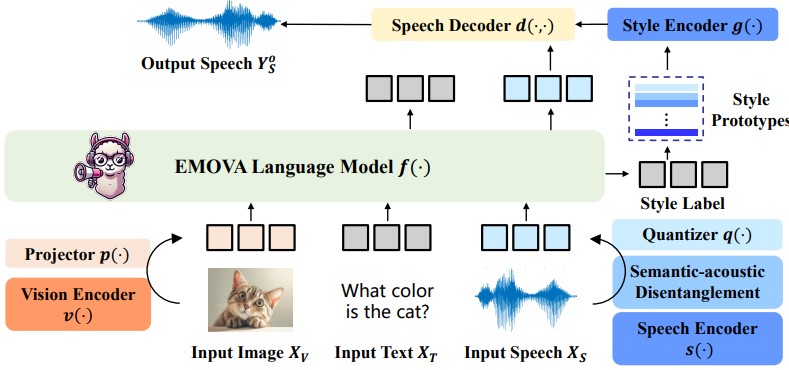

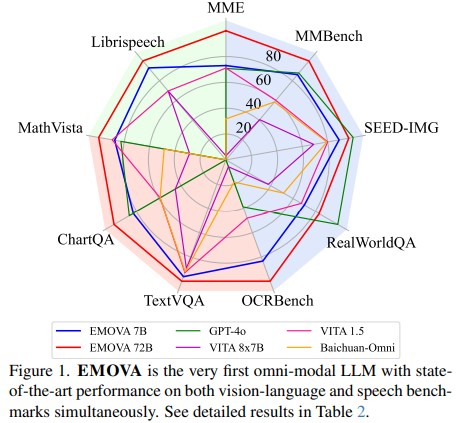

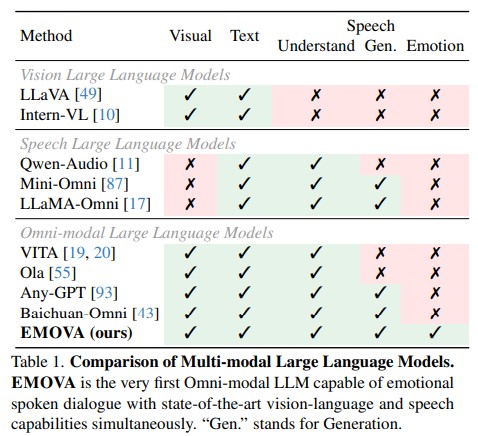

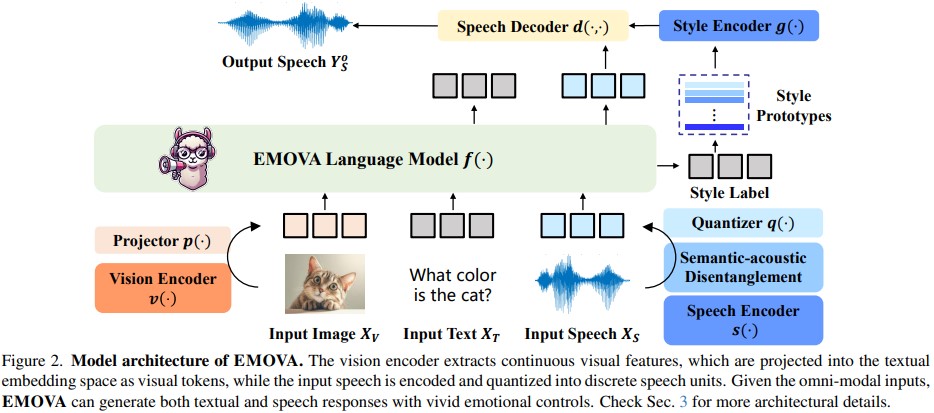

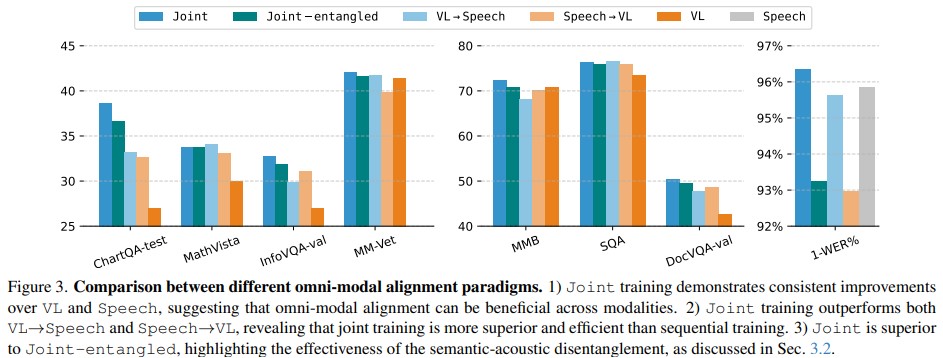

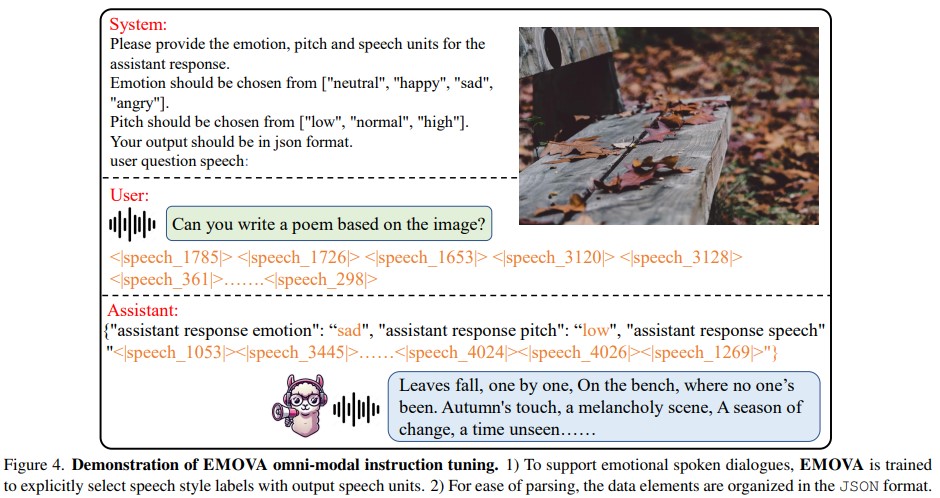

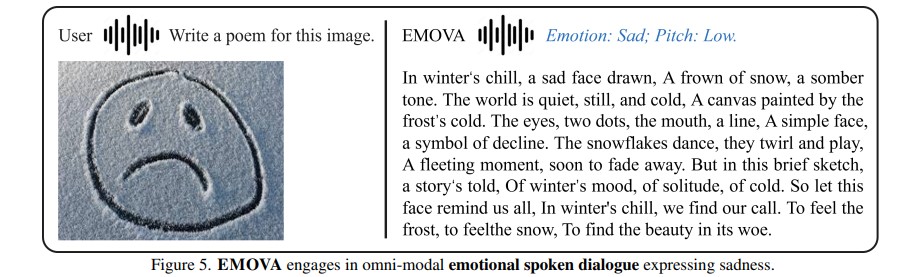

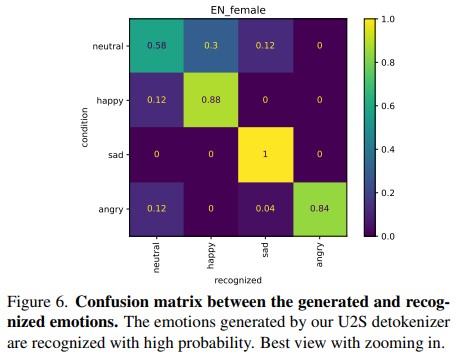

GPT-4o, an omni-modal model that enables vocal conversations with diverse emotions and tones, marks a milestone for omni-modal foundation models. However, empowering Large Language Models to perceive and generate images, text, and speech end-to-end with publicly available data remains challenging for the open-source community. Existing vision-language models rely on external tools for speech processing, while speech-language models still have limited or no vision-understanding capabilities. To address this gap, we propose EMOVA (EMotionally Omni-present Voice Assistant), which equips Large Language Models with end-to-end speech abilities while maintaining leading vision-language performance. With a semantic-acoustic disentangled speech tokenizer, we find, surprisingly, that omni-modal alignment can further enhance vision-language and speech abilities compared with the bi-modal aligned counterparts. Moreover, a lightweight style module is introduced for flexible control of speech style, including emotion and pitch. For the first time, EMOVA achieves state-of-the-art performance on both vision-language and speech benchmarks while supporting omni-modal spoken dialogue with vivid emotions.

Framework

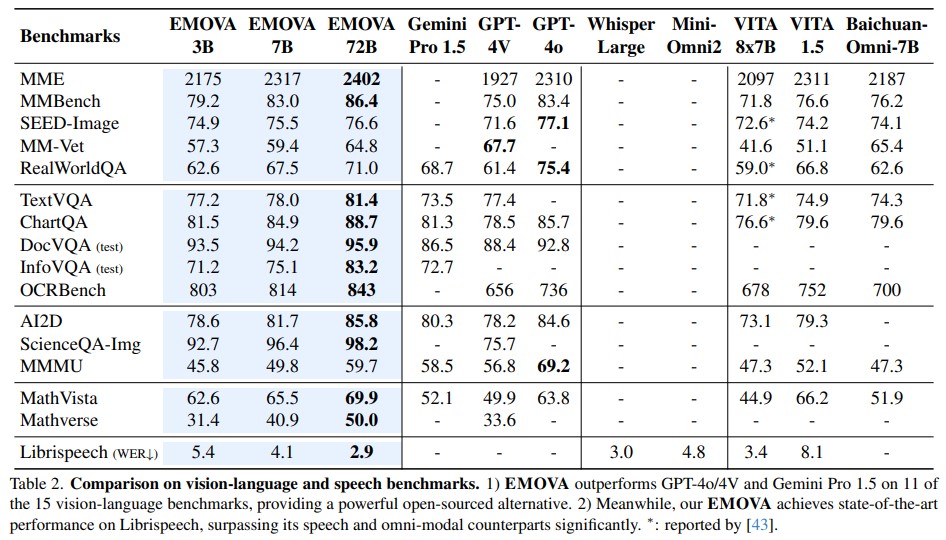

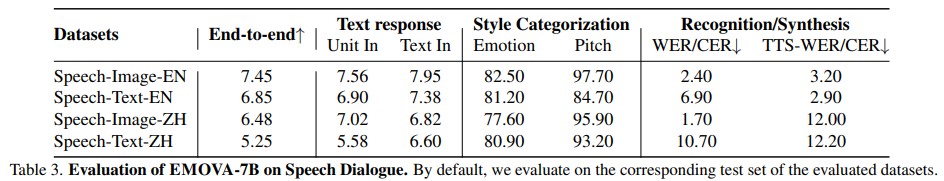

Experiment

Conclusion

This work presents EMOVA, a novel end-to-end omni-modal large language model that effectively aligns vision, speech, and text simultaneously. With text as a bridge, we show that omni-modal alignment is achievable without relying on omni-modal image-text-speech data, and that it also enhances both vision-language and speech abilities. For the first time, EMOVA achieves state-of-the-art performance on both vision-language and speech benchmarks, setting a new standard for versatile omni-modal interactions.