Abstract

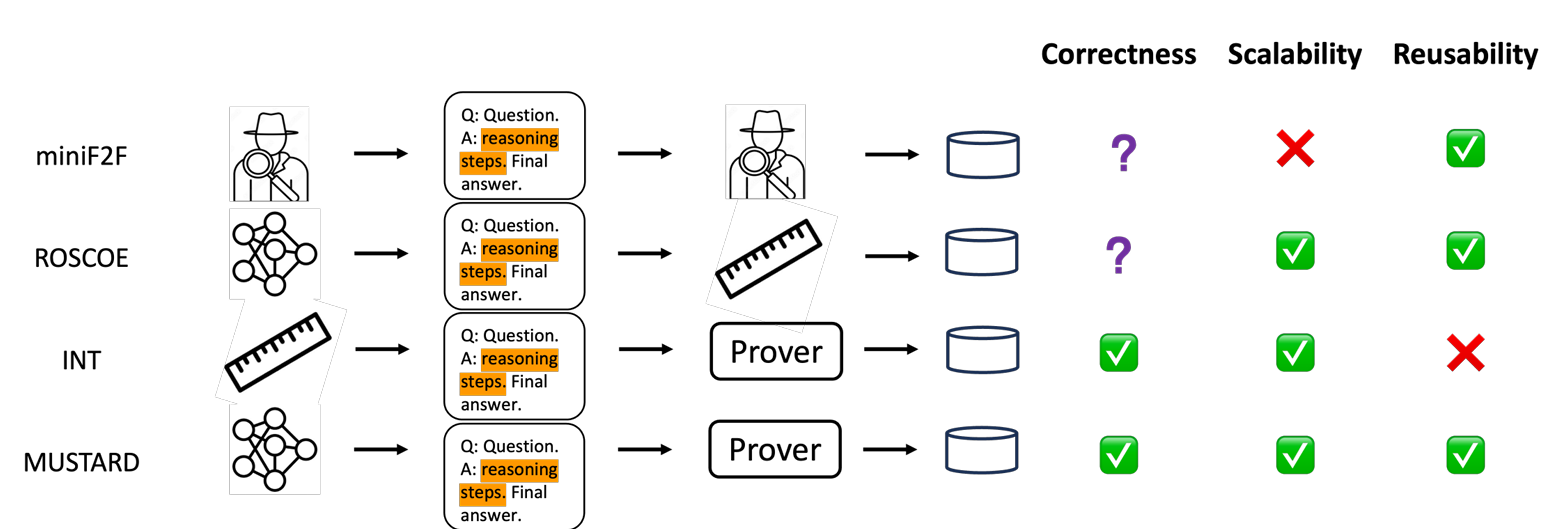

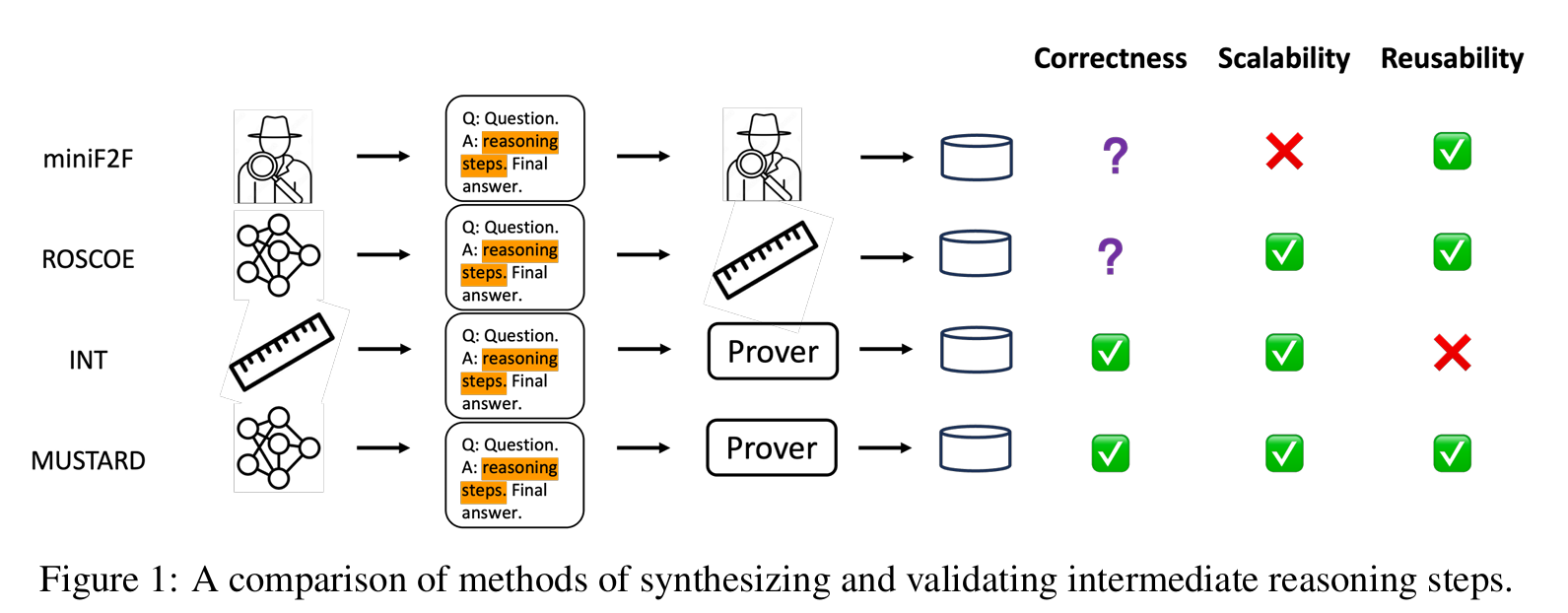

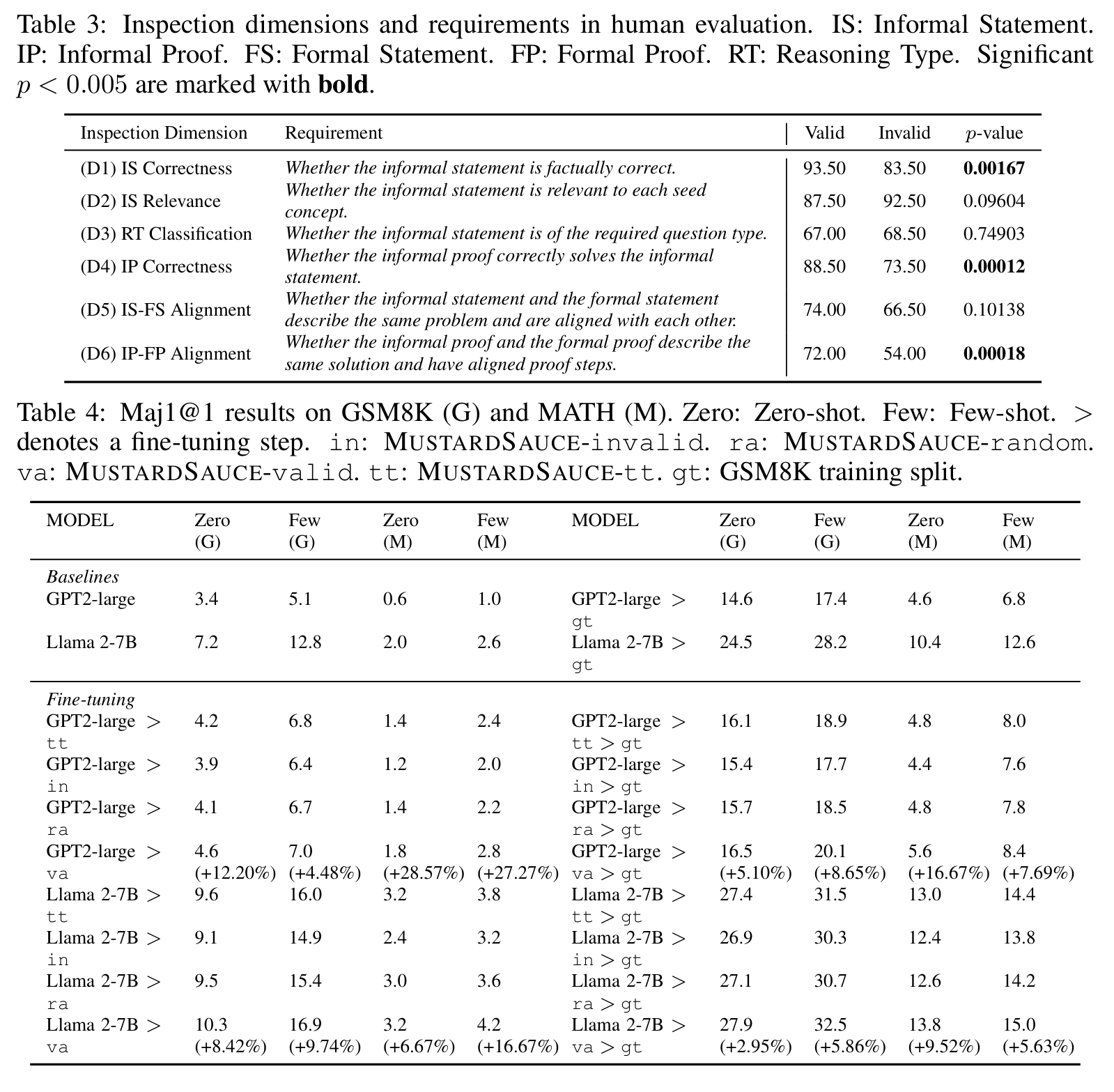

Recent large language models (LLMs) have witnessed significant advancement in various tasks, including mathematical reasoning and theorem proving. As these two tasks require strict and formal multi-step inference, they are appealing do mains for exploring the reasoning ability of LLMs but still face important chal lenges. Previous studies such as Chain-of-Thought (CoT) have revealed the ef fectiveness of intermediate steps guidance. However, such step-wise annotation requires heavy labor, leading to insufficient training steps for current benchmarks. To fill this gap, this work introduces MUSTARD, a data generation framework that masters u niform synthesis of theorem and proof data of high quality and diversity. MUSTARD synthesizes data in three stages: (1) It samples a few math ematical concept seeds as the problem category. (2) Then, it prompts a genera tive language model with the sampled concepts to obtain both the problems and their step-wise formal solutions. (3) Lastly, the framework utilizes a proof assis tant (e.g., Lean Prover) to filter the valid proofs. With the proposed MUSTARD, we present a theorem-and-proof benchmark MUSTARDSAUCE with 5,866 valid data points. Each data point contains an informal statement, an informal proof, and a translated formal proof that passes the prover validation. We perform ex tensive analysis and demonstrate that MUSTARD generates validated high-quality step-by-step data. We further apply the MUSTARDSAUCE for fine-tuning smaller language models. The fine-tuned Llama 2-7B achieves a 15.41% average rela tive performance gain in automated theorem proving, and 8.18% in math word problems.

Framework

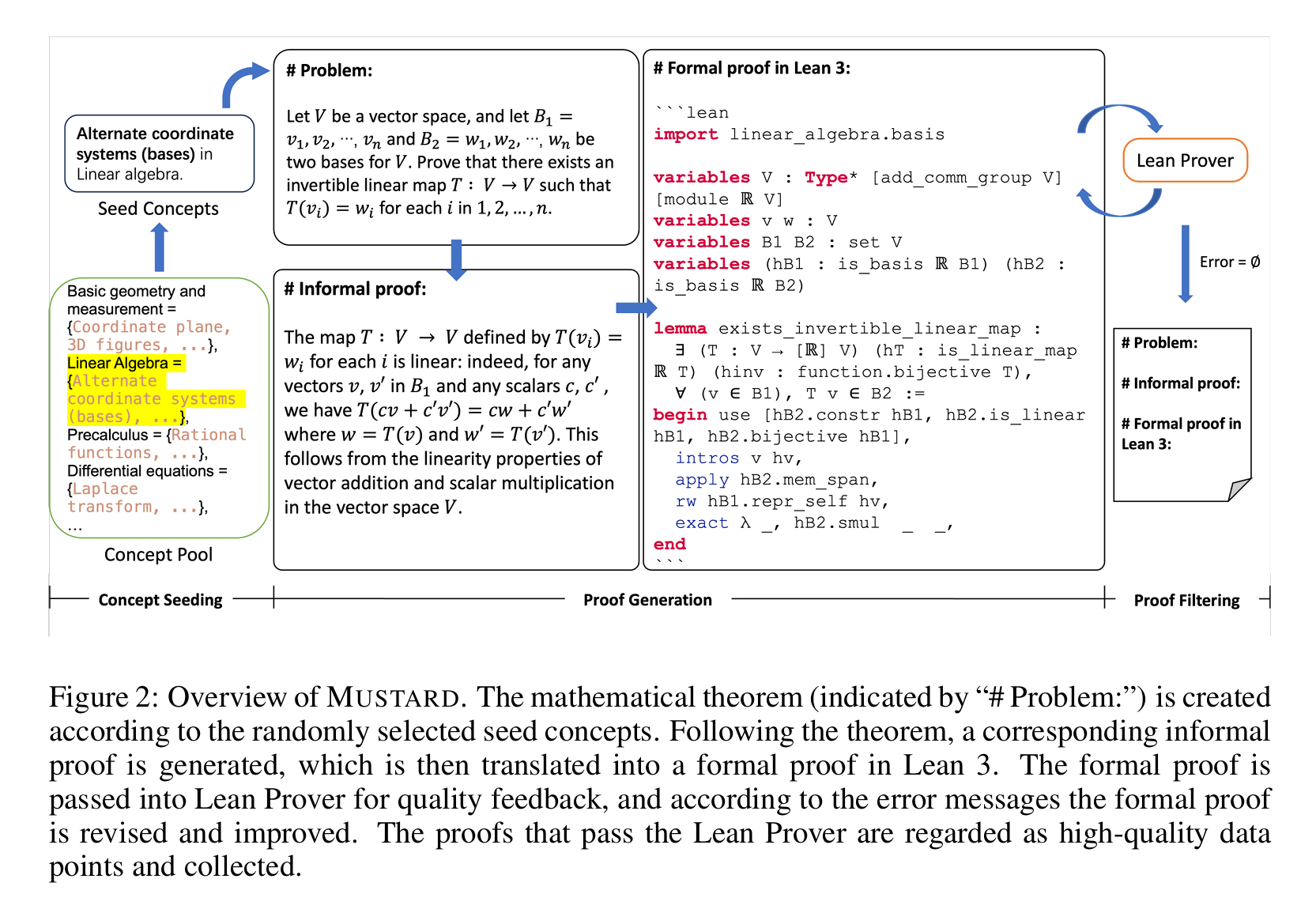

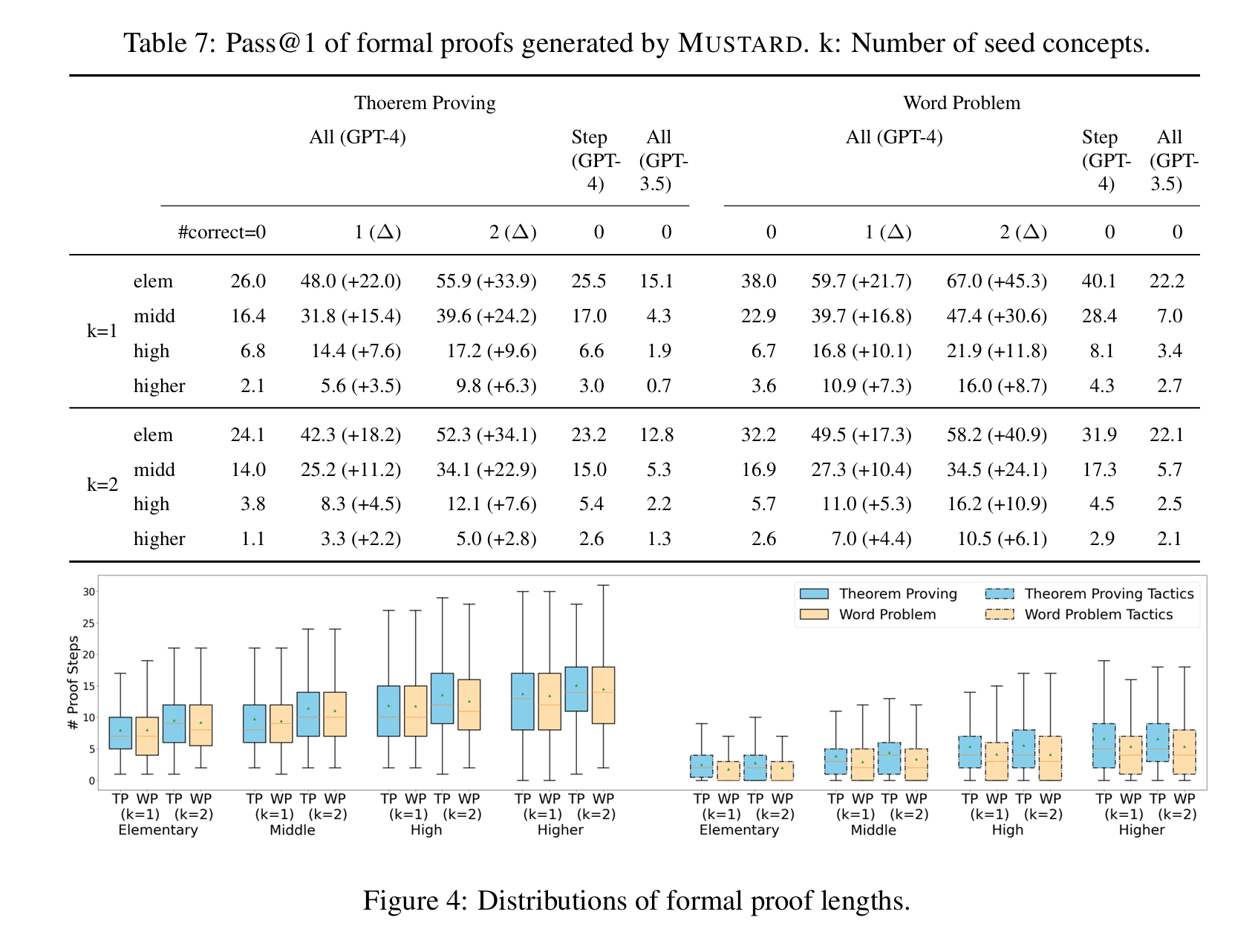

Experiment

Conclusion

Inthispaper,weintroduceMUSTARDtoautomaticallygeneratemathematicaldatasetswithhigh qualitysolutionsthatcoveravarietyofmathematicalskills.LeveragingtheLLMandLeanProver, MUSTARDcangenerate theproblemstatement, informal solution, andformal solution. anduse aLeanProver toautomaticallyverifytheformal solutionandprovidefeedbackfor revision. At last,weapplytheproposedMUSTARDandobtain5,866problemswithstep-by-stepsolutionsthat coverdifferenteducational levelsandmathematicalabilities. Theobtaineddatasethasshownits highquality, diversity, andeffectiveness inimprovinglanguagemodels’mathematical reasoning performance,showingthegreatpotentialofourproposedMUSTARDanddatasetinfurtherresearch onlanguagemodels.