Abstract

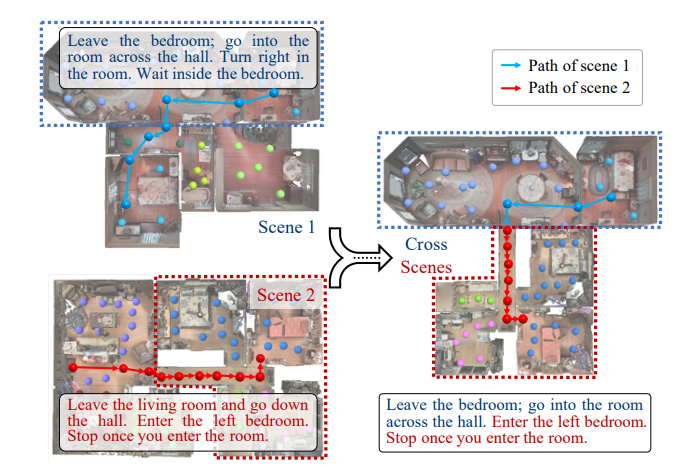

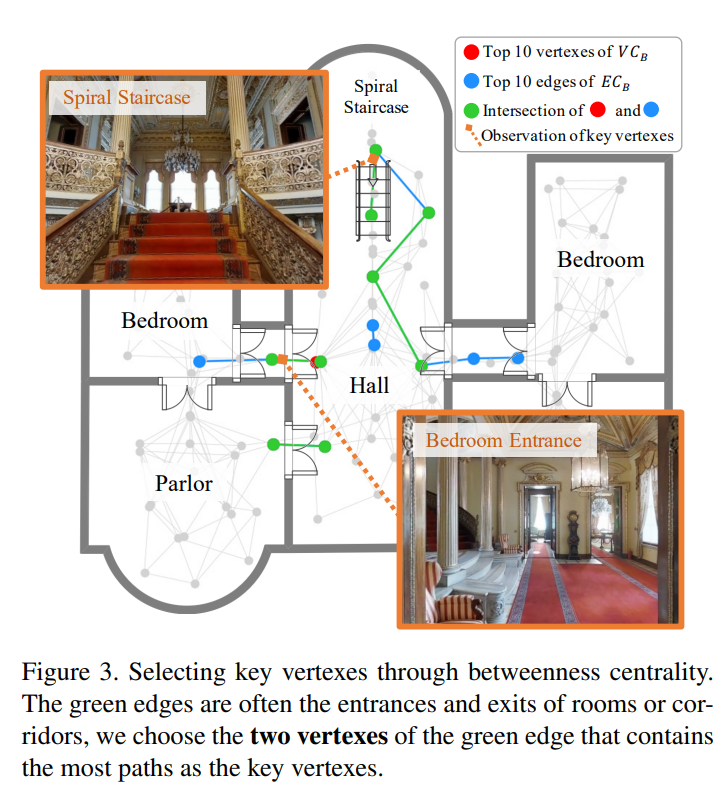

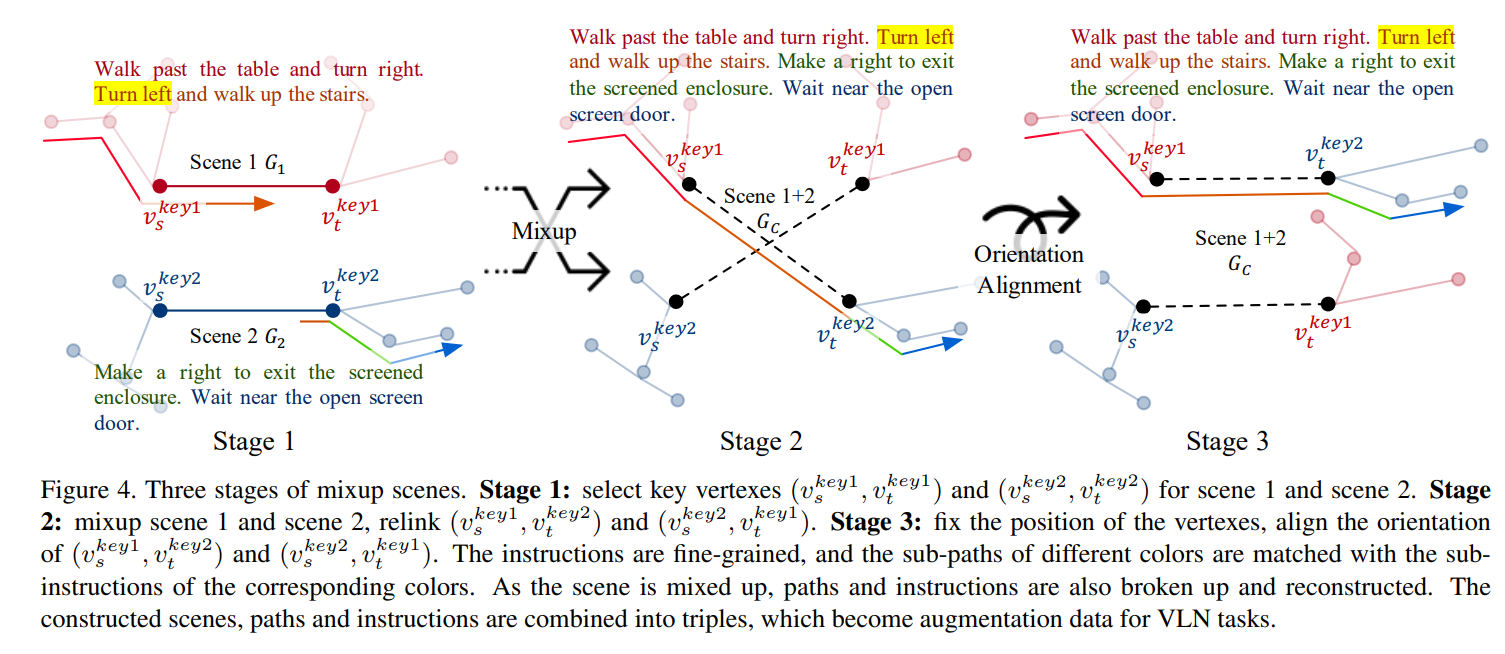

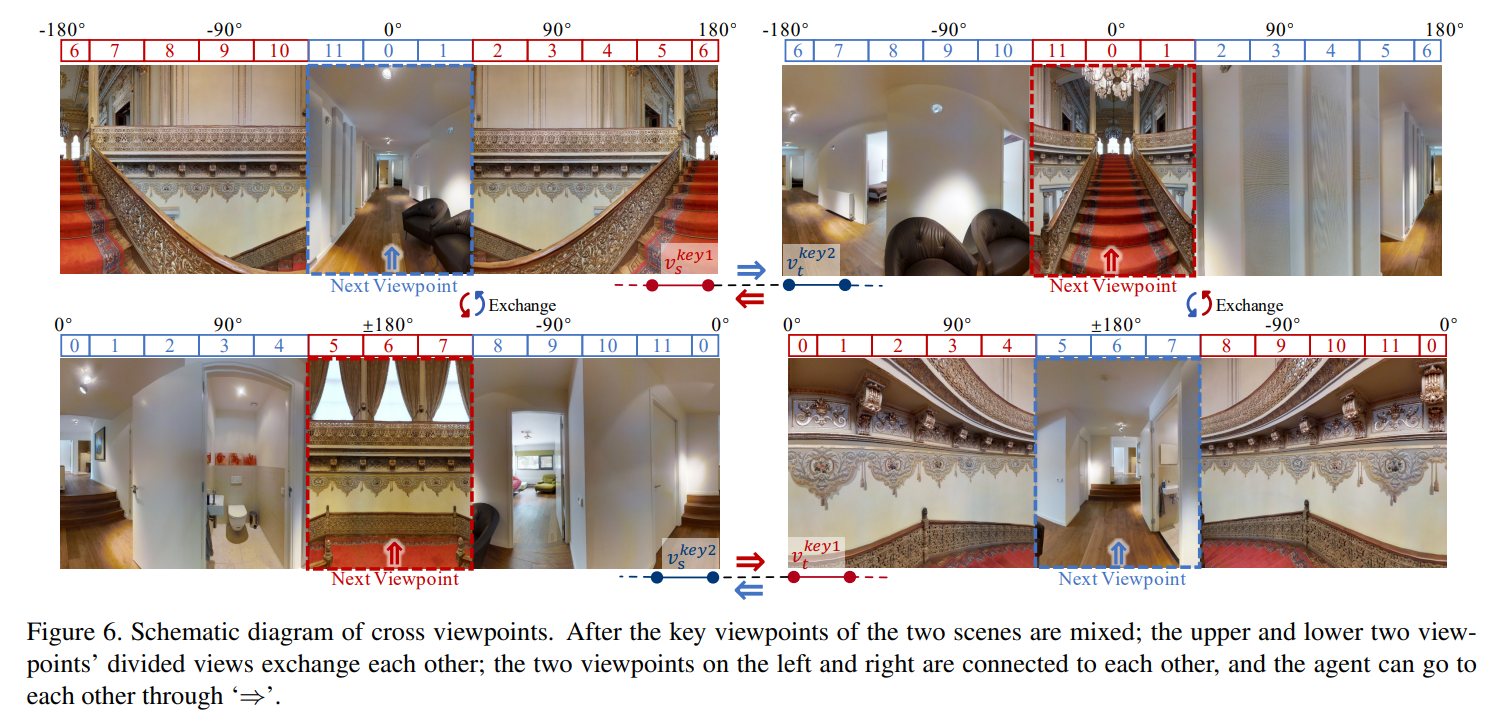

Vision-Language Navigation (VLN) tasks require an agent to navigate step by step while perceiving visual observations and comprehending a natural language instruction. Large data bias, caused by the disparity between the small scale of the available data and the large navigation space, makes the VLN task challenging. Previous works have proposed various data augmentation methods to reduce data bias. However, these works do not explicitly reduce the data bias across different house scenes. As a result, the agent overfits to the seen scenes and performs poorly in unseen scenes. To tackle this problem, we propose the Random Environmental Mixup (REM) method, which generates cross-connected house scenes as augmented data by mixing up environments. Specifically, we first select key viewpoints for each scene according to its room connection graph. Then, we cross-connect the key views of different scenes to construct augmented scenes. Finally, we generate augmented instruction-path pairs in the cross-connected scenes. Experimental results on benchmark datasets demonstrate that the augmented data generated by REM helps the agent reduce its performance gap between seen and unseen environments and improve its overall performance, making our model the best existing approach on the standard VLN benchmark. The code has been released at https://github.com/LCFractal/VLNREM.
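The three augmentation steps can be illustrated with a small, self-contained Python sketch. It is not the authors' implementation (their code is in the repository linked above), and every name and rule in it is a hypothetical simplification: a scene is a dictionary mapping viewpoint ids to room labels plus a set of undirected edges; a "key" connection is assumed to be any edge joining two different rooms; cross-connecting is assumed to cut one randomly chosen key edge in each scene and rewire the cut ends across the two scenes; and a room-sequence template stands in for the learned instruction generator a real pipeline would use.

```python
import random
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Edge = Tuple[str, str]
# Hypothetical scene format: (viewpoint id -> room label, set of undirected edges).
# Viewpoint ids are assumed unique across scenes (e.g. prefixed with the scene id).
Scene = Tuple[Dict[str, str], Set[Edge]]


def key_edges(scene: Scene) -> List[Edge]:
    """Assumed rule: an edge is 'key' if it joins two different rooms."""
    rooms, edges = scene
    return sorted(e for e in edges if rooms[e[0]] != rooms[e[1]])


def cross_connect(a: Scene, b: Scene, rng: random.Random) -> Scene:
    """Cut one random key edge in each scene and rewire the cut ends across scenes."""
    (u, v), (x, y) = rng.choice(key_edges(a)), rng.choice(key_edges(b))
    rooms = {**a[0], **b[0]}
    edges = (a[1] - {(u, v)}) | (b[1] - {(x, y)}) | {(u, y), (x, v)}
    return rooms, edges


def shortest_path(scene: Scene, start: str, goal: str) -> List[str]:
    """Breadth-first search over the undirected viewpoint graph; [] if unreachable."""
    adj: Dict[str, List[str]] = {}
    for p, q in scene[1]:
        adj.setdefault(p, []).append(q)
        adj.setdefault(q, []).append(p)
    prev: Dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            break
        for nxt in sorted(adj.get(node, [])):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    if goal not in prev:
        return []
    path: List[str] = []
    cur: Optional[str] = goal
    while cur is not None:
        path.append(cur)
        cur = prev[cur]
    return path[::-1]


def template_instruction(scene: Scene, path: List[str]) -> str:
    """Placeholder for a learned speaker model: names the rooms visited in order."""
    rooms: List[str] = []
    for vp in path:
        if not rooms or rooms[-1] != scene[0][vp]:
            rooms.append(scene[0][vp])
    return f"Walk through the {', then into the '.join(rooms)} and stop."


# Toy example with two hypothetical three-viewpoint houses.
scene_a = ({"A0": "kitchen", "A1": "kitchen", "A2": "hallway"},
           {("A0", "A1"), ("A1", "A2")})
scene_b = ({"B0": "bedroom", "B1": "bathroom", "B2": "bathroom"},
           {("B0", "B1"), ("B1", "B2")})
mixed = cross_connect(scene_a, scene_b, random.Random(0))
path = shortest_path(mixed, "A0", "B2")
instruction = template_instruction(mixed, path)
print(path)         # ['A0', 'A1', 'B1', 'B2']
print(instruction)  # Walk through the kitchen, then into the bathroom and stop.
```

Rewiring a pair of cut edges leaves every viewpoint's degree unchanged, and because the rewired edges are the only links between the two houses, any path from a start in scene A to a goal in scene B must cross one of them. The sampled instruction-path pair therefore spans two houses, which is the inter-scene property the method targets.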

Framework

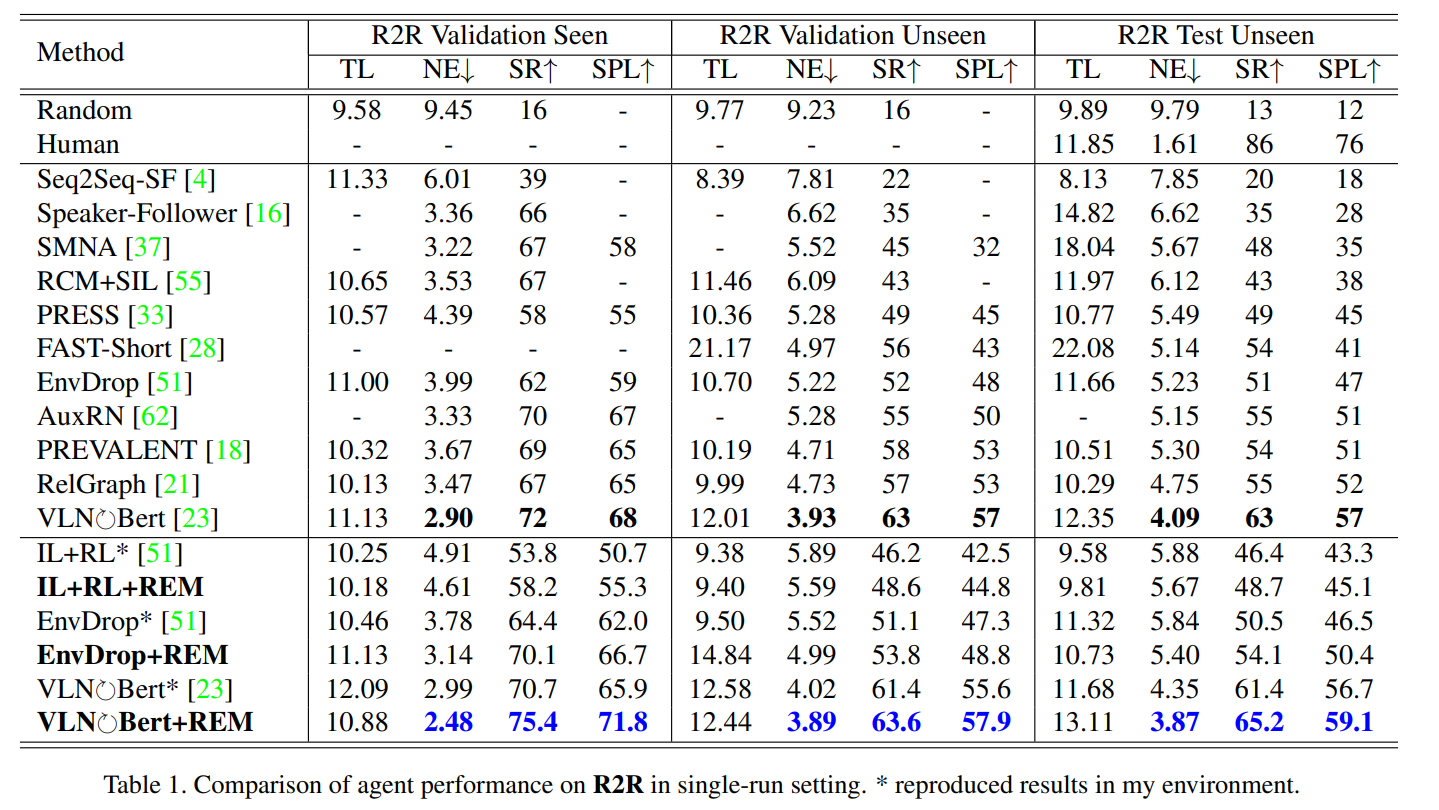

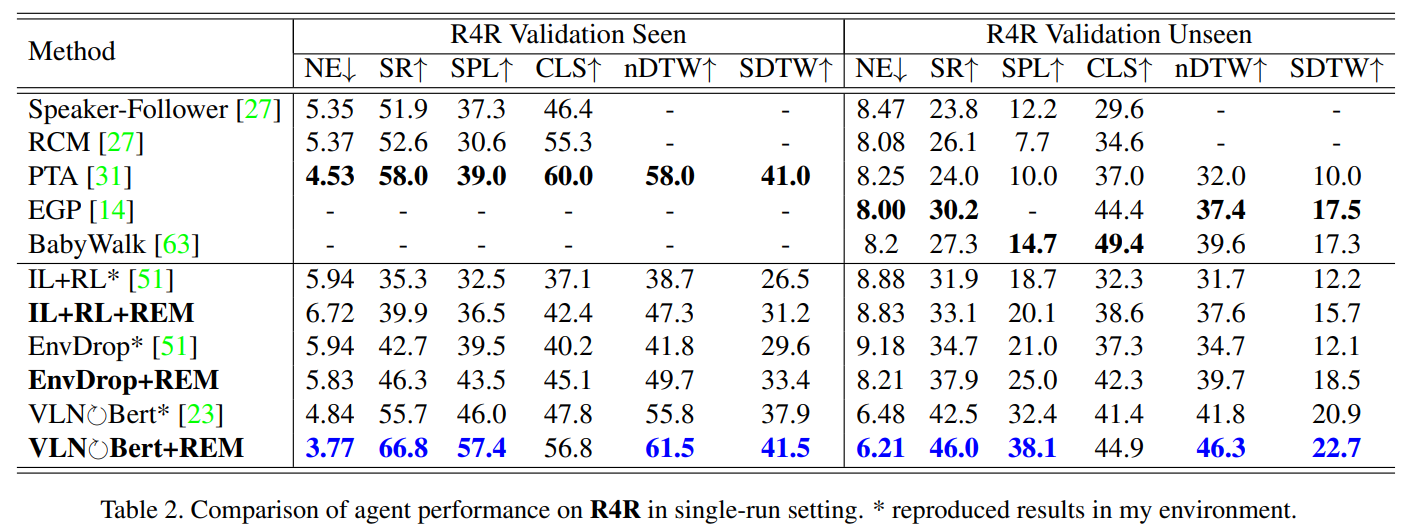

Experiment

Conclusion

In this paper, we analyze the factors that affect generalization ability and put forward the hypothesis that inter-scene data augmentation can reduce generalization error more effectively than augmentation within a single scene. Accordingly, we propose the Random Environmental Mixup (REM) method, which generates cross-connected house scenes as augmented data by mixing up environments. Experimental results on benchmark datasets demonstrate that REM significantly reduces the performance gap between seen and unseen environments and substantially improves overall navigation performance. Finally, the ablation analysis verifies our hypothesis on reducing generalization error.
