Abstract

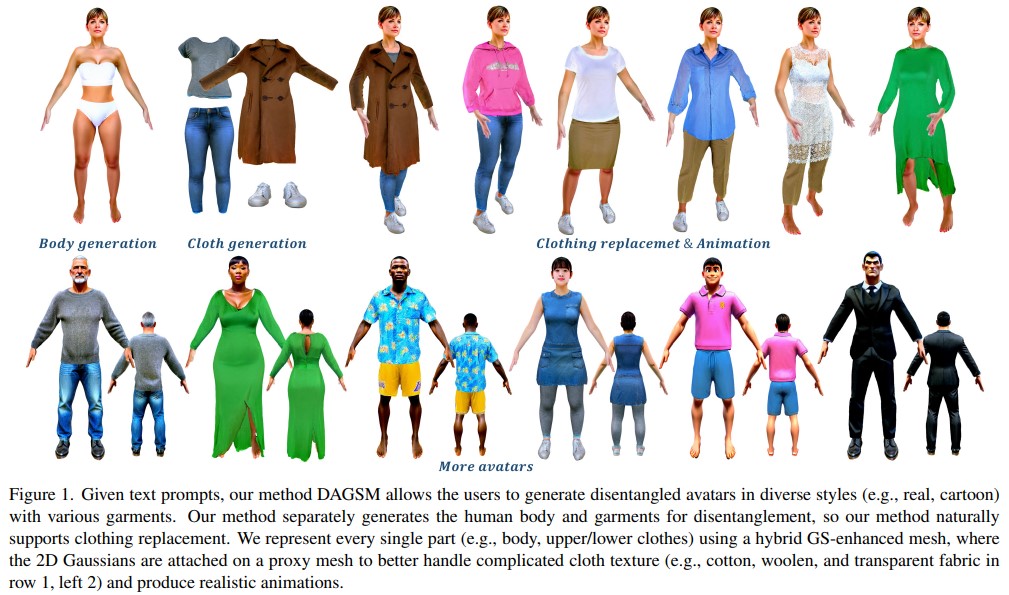

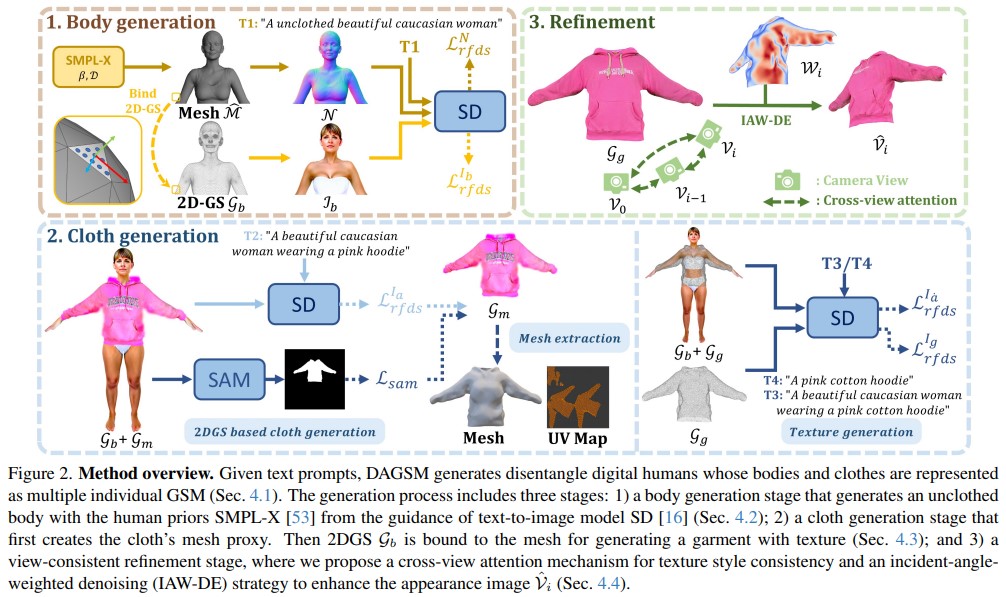

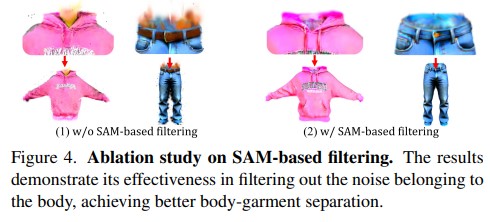

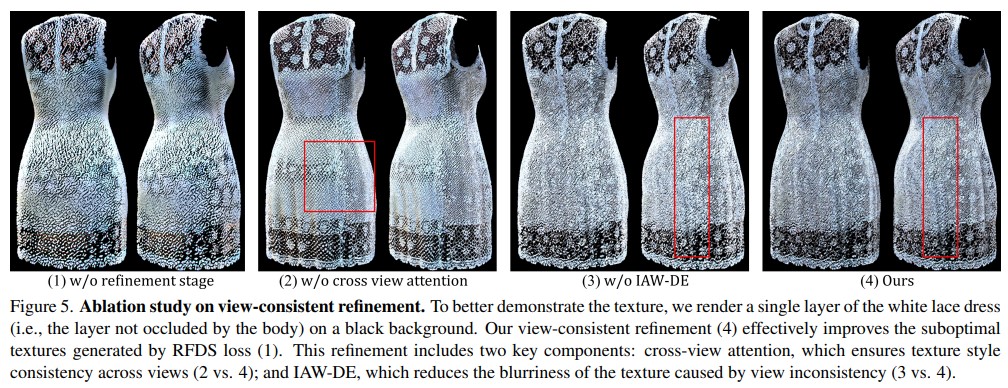

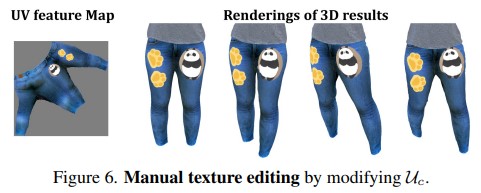

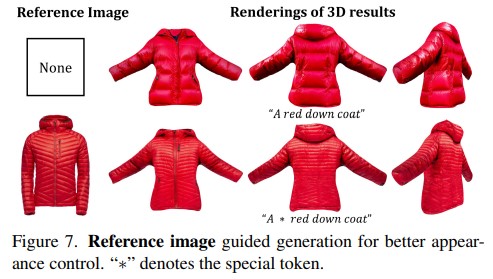

Text-driven avatar generation has gained significant attention owing to its convenience. However, existing methods typically model the human body and all of its garments as a single 3D model, which limits usability (for example, clothing replacement) and reduces user control over the generation process. To overcome these limitations, we propose DAGSM, a novel pipeline that generates disentangled human bodies and garments from given text prompts. Specifically, we model each part of the clothed human (e.g., body, upper/lower clothes) as one GS-enhanced mesh (GSM), a traditional mesh with 2D Gaussians attached, which better handles complicated textures (e.g., woolen or translucent clothes) and produces realistic cloth animations. During generation, we first create the unclothed body and then generate the individual garments one by one on top of it, using a semantic-based algorithm to achieve better human-cloth and garment-garment separation. To improve texture quality, we propose a view-consistent texture refinement module that combines a cross-view attention mechanism for texture style consistency with an incident-angle-weighted denoising (IAW-DE) strategy for updating the appearance. Extensive experiments demonstrate that DAGSM generates high-quality disentangled avatars, supports clothing replacement and realistic animation, and outperforms the baselines in visual quality.
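The abstract does not define IAW-DE, so the following is only a minimal sketch of the general idea behind incident-angle weighting, not the authors' method: a view that sees a surface point head-on is trusted more than one that sees it at a grazing angle. The function names (`incident_angle_weight`, `blend_texel`), the `power` parameter, and the use of a plain weighted average are all assumptions made for illustration; the diffusion denoising step itself is not modeled here.

```python
import math


def incident_angle_weight(normal, to_camera, power=1.0):
    """Hypothetical weight in [0, 1] for one surface point seen from one view.

    normal    -- surface normal at the point (any non-zero length)
    to_camera -- direction from the point toward the camera (any non-zero length)
    power     -- assumed sharpening exponent; larger values favor head-on views
    """
    n_len = math.sqrt(sum(c * c for c in normal))
    v_len = math.sqrt(sum(c * c for c in to_camera))
    cos_theta = sum(n * v for n, v in zip(normal, to_camera)) / (n_len * v_len)
    # Back-facing and grazing views contribute nothing.
    return max(0.0, cos_theta) ** power


def blend_texel(current, proposals):
    """Weighted average of per-view color proposals for one texel.

    current   -- existing color, kept unchanged if no view has positive weight
    proposals -- list of (color, weight) pairs, one per view
    """
    total = sum(w for _, w in proposals)
    if total == 0:
        return current
    return tuple(
        sum(color[i] * w for color, w in proposals) / total
        for i in range(len(current))
    )
```

In this sketch, a texel whose normal points straight at the camera receives weight 1, while a texel seen edge-on or from behind receives weight 0, so its existing color is left to views that observe it more reliably.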

Framework

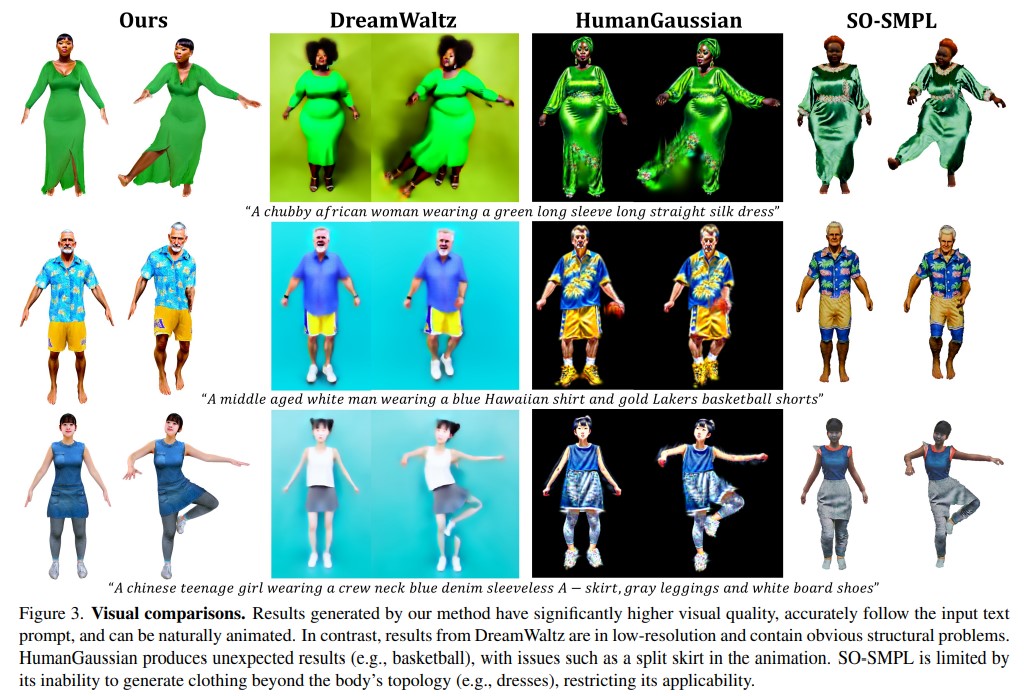

Experiment

Conclusion

In this paper, we propose DAGSM, which lets users generate digital humans with decoupled bodies and garments from text prompts. We generate the human body and the garments separately, each represented by its own GSM, a hybrid representation that binds 2D Gaussian splatting (2DGS) to a mesh. Thanks to the disentangled generation pipeline and the GSM representation, DAGSM naturally enables clothing replacement and transparent fabric generation, and supports cloth simulation during animation. Extensive experiments demonstrate that DAGSM outperforms existing methods, delivering higher visual quality, supporting more features, and enabling more realistic animations. One limitation of DAGSM is that the TSDF algorithm used for mesh extraction reconstructs only the garment surface, omitting internal structures such as pockets.
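To illustrate why a disentangled representation makes clothing replacement straightforward, here is a minimal sketch of an avatar stored as a body plus independent garment layers. The class and field names (`GSM`, `Avatar`, `with_garment`, the slot names) are hypothetical and chosen only for this example; real GSMs would carry mesh geometry and Gaussian parameters rather than the placeholder counts used here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GSM:
    """Placeholder for one GS-enhanced mesh (fields are illustrative only)."""
    name: str
    num_vertices: int
    num_gaussians: int


@dataclass(frozen=True)
class Avatar:
    """A body plus garments, each kept as its own GSM."""
    body: GSM
    garments: dict  # assumed mapping: slot name (e.g. "upper") -> GSM

    def with_garment(self, slot, garment):
        """Return a new avatar with one garment slot replaced.

        The body and all other garments are reused as-is, which is only
        possible because each part is a separate model.
        """
        updated = dict(self.garments)
        updated[slot] = garment
        return Avatar(self.body, updated)


body = GSM("body", 10_000, 40_000)
avatar = Avatar(body, {
    "upper": GSM("wool sweater", 6_000, 24_000),
    "lower": GSM("jeans", 5_000, 20_000),
})
restyled = avatar.with_garment("upper", GSM("translucent blouse", 6_500, 26_000))
```

With a single fused model, the same change would require regenerating the whole avatar; here only the `"upper"` entry differs between `avatar` and `restyled`.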